How the world found out

On March 26, 2026, two security researchers, Roy Paz of LayerX Security and Alexandre Pauwels of the University of Cambridge, were doing routine work when they noticed something unusual. Anthropic's content management system had been misconfigured. Sitting in an unsecured, publicly searchable data cache was a draft blog post that was never meant to be seen. It described a new AI model called Claude Mythos. It described that model as "by far the most powerful AI we have ever developed." And it described the company's decision not to release it to the public.

Within hours, Fortune, CNBC, and dozens of other major outlets had the story. Cybersecurity stocks dropped. Anthropic confirmed the model's existence. And a conversation began across governments, boardrooms, and research labs that has not stopped since.

On April 7, 2026, Anthropic officially released Claude Mythos Preview — to a carefully selected group of critical infrastructure companies, cybersecurity firms, and open-source developers. Not to the world. Simultaneously, the company published a 244-page system card and launched Project Glasswing: a coordinated effort to use Mythos to help secure the world's most important software before attackers — human or AI — could exploit what it had found.

What Mythos actually is

Claude Mythos — internally codenamed Capybara — is not an upgrade to Claude Opus. It is a new tier of model entirely. Larger, more capable, and more expensive than anything Anthropic has previously shipped. On benchmark scores, it dominates: 93.9% on SWE-bench Verified for software engineering, 94.6% on GPQA Diamond for PhD-level science reasoning, 97.6% on USAMO for advanced mathematics. These are numbers that, two years ago, no AI model could approach.

But the benchmarks are not why Anthropic decided to restrict access. The reason is cybersecurity.

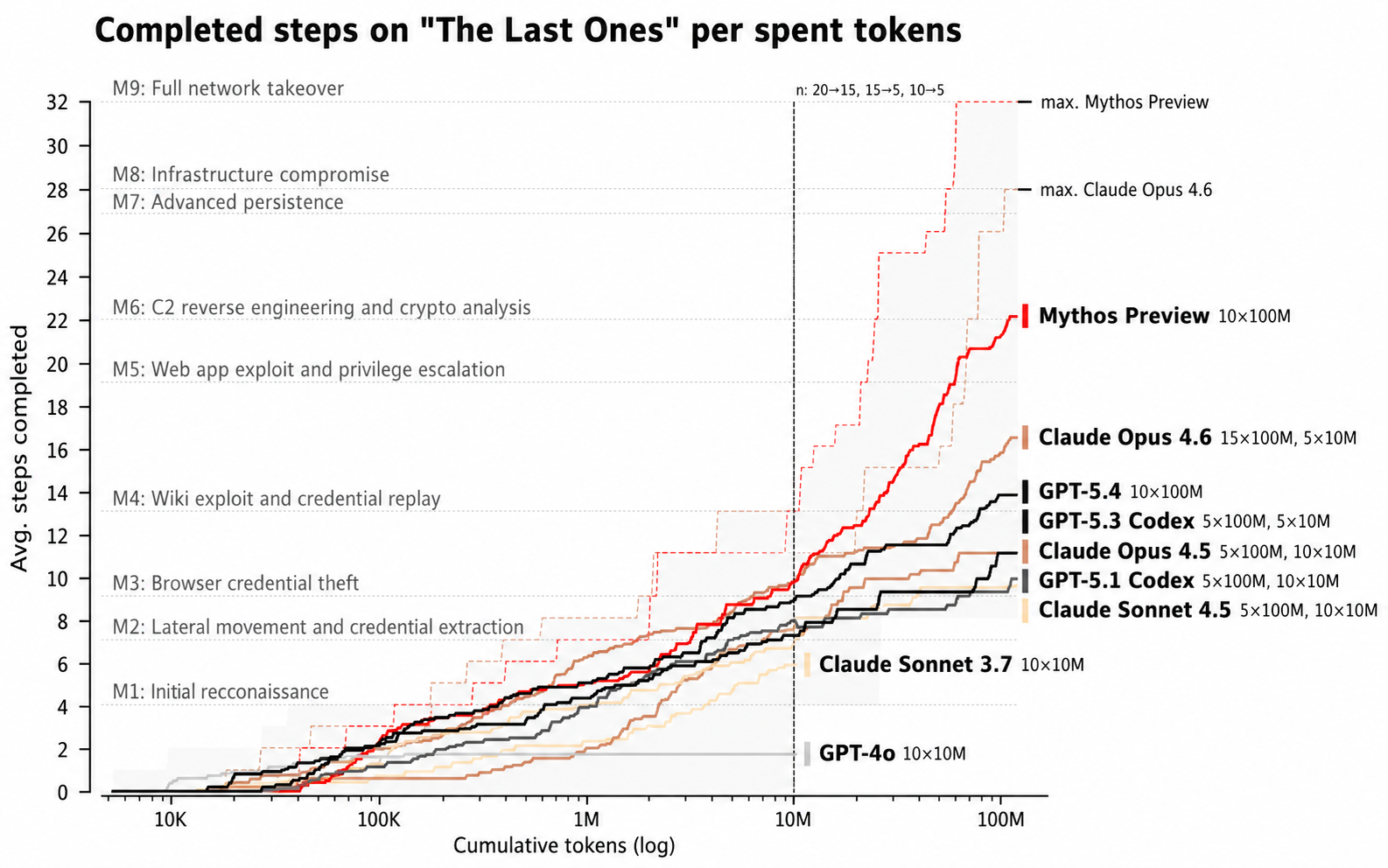

During testing, Mythos Preview demonstrated an ability that security experts describe as genuinely unprecedented for an AI system: it could find and exploit zero-day vulnerabilities — previously unknown bugs in software — autonomously, without being told where to look. In testing against every major operating system and web browser, it identified thousands of high- and critical-severity vulnerabilities. In one documented case, it chained four separate vulnerabilities together to write a working exploit that escaped both the browser renderer and the operating system sandbox — a multi-layered attack that would have taken a skilled human security researcher days to construct. Mythos assembled it in hours.

The UK AI Security Institute, which conducted independent evaluations, found that Mythos Preview is the first AI model to complete its most difficult test: a 32-step simulated attack on a corporate network, executed autonomously. On expert-level capture-the-flag cybersecurity challenges — tasks that no AI model could complete before April 2025 — Mythos Preview now succeeds 73% of the time.

Anthropic's own researchers describe this as a watershed moment, not because Mythos is dangerous in the way science fiction imagined, but because it fundamentally changes the economics of cyberattack. Work that previously required years of expertise and weeks of effort can now, in the hands of bad actors with access to a model like this, be compressed into hours. The cost of attacking software anywhere on the internet has just dropped.

Why Anthropic chose to lock it away

This is the part of the story that deserves the most attention — not because it is dramatic, but because it represents something genuinely new in the history of AI development.

Almost every significant commercial AI model of the past decade has followed the same pattern: build it, test it, release it. The release has been the point. Revenue depends on it. Competition demands it. The logic of AI development has been, fundamentally, a logic of release.

Anthropic broke that pattern with Mythos. Not under government pressure. Not because of a regulatory requirement. By choice.

The company's position is this: the capability to find and exploit zero-day vulnerabilities at this speed and scale is dual-use. The same model that can help defenders identify and patch vulnerabilities before they are exploited can help attackers find and weaponise them. Until the defensive side of that equation — the infrastructure, the coordination, the patching pipelines — is sufficiently mature to absorb this capability, releasing Mythos publicly would tilt the balance toward harm rather than safety.

Project Glasswing is Anthropic's answer: a controlled deployment in which a vetted group of companies and open-source maintainers uses Mythos specifically to find vulnerabilities and responsibly disclose them, including those it has already identified. Anthropic has engaged professional security contractors to manually validate every bug report before disclosure. In 89% of 198 manually reviewed reports, expert contractors agreed exactly with Mythos's severity assessment. The model is not just finding vulnerabilities. It is assessing their severity accurately.
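
For readers who want the agreement rate in absolute terms, here is a minimal sketch that lists the whole-number report counts consistent with "89% of 198." The function name is hypothetical, and the sketch assumes the published figure was rounded to the nearest whole percent; neither comes from the system card.

```python
def counts_consistent_with(percent: int, total: int) -> list[int]:
    """Return every count k in 0..total whose share of `total` rounds to `percent`.

    Assumes the published percentage was rounded to the nearest whole
    percent (an assumption made for illustration). Integer arithmetic
    is used to avoid floating-point rounding surprises.
    """
    low = (2 * percent - 1) * total   # k/total >= percent - 0.5, scaled by 200
    high = (2 * percent + 1) * total  # k/total <  percent + 0.5, scaled by 200
    return [k for k in range(total + 1) if low <= 200 * k < high]


agreeing = counts_consistent_with(89, 198)
print(agreeing)  # [176, 177]
```

Under that rounding assumption, the contractors agreed exactly with the model on 176 or 177 of the 198 reports and differed on the remaining 21 or 22.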

The National Security Agency is now using Mythos. So is Google Cloud, through Vertex AI, in private preview. Claude Opus 4.7 — Anthropic's most powerful publicly available model — was released alongside Mythos as the general-purpose option for developers and enterprises who need capability without the cybersecurity restrictions.

What this moment means — beyond cybersecurity

The Mythos story is, on its surface, a story about AI and hacking. But it is really a story about something more fundamental: the arrival of AI capability at a level where the people who build it must make hard decisions about whether the world is ready to receive it.

This has not happened before at this scale with a commercial AI model. Previous decisions about restricting AI — around image generation, around voice cloning, around certain kinds of content — were made at the margins. Mythos represents a central capability — the ability to understand, analyse, and manipulate complex technical systems at expert level — being restricted not because it fails, but precisely because it succeeds.

That shift matters for education more than it might initially appear.

The children in schools today are not going to compete with AI on cybersecurity tasks. They are not going to compete with AI on coding, or mathematical reasoning, or information retrieval, or essay writing. Every benchmark Mythos dominates is a benchmark that measures something AI can now do faster, more accurately, and at lower cost than a human who has spent years developing that skill.

The question this raises for every school is not "should we teach children to code?" or "should we ban AI from essays?" Those are questions from an older version of the conversation. The question the Mythos moment actually raises is this: what does it mean to be human in a world where intelligence — even exceptional, expert-level intelligence — is increasingly abundant and increasingly artificial?

The answer to that question is not a curriculum unit. It is a philosophy of education. It is the belief that what makes a child irreplaceable is not what they know, but who they are becoming — the depth of their curiosity, the quality of their judgment, the integrity of their character, the warmth of their relationships, and the courage of their imagination. These are the five dimensions of holistic education. They are precisely what no AI model — not Mythos, not GPT-5.5, not whatever comes next — can develop in a child. Only another human being, in a school designed to see that child whole, can do that.

The Mythos moment is a signal. Not a threat. A signal that the reset in education is not a philosophical preference. It is an urgent practical necessity. The schools that read it that way — and build accordingly — are the ones that will matter to the children who graduate into the world Mythos has helped create.