What was launched

On April 24, 2026, DeepSeek, the Hangzhou-based AI startup that rattled global markets with its R1 model in January 2025, released preview versions of two new models on Hugging Face: DeepSeek-V4-Pro and DeepSeek-V4-Flash. Both are fully open-source, meaning any developer, school, or research institution can download, run, and modify them freely.

The headline numbers are significant. V4-Pro is a Mixture-of-Experts model with 1.6 trillion total parameters, of which only 49 billion are activated for each token it processes, so it is far cheaper to run than its size suggests. The context window is 1 million tokens, equivalent to roughly 750,000 words, enough to read an entire textbook in a single pass. V4-Flash is the lighter, faster, cheaper sibling: 284 billion total parameters, 13 billion active, and the same 1-million-token window.
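To make the efficiency claim concrete, the short Python sketch below works out what share of each model's parameters is active at once, using only the figures quoted above. The function name `active_fraction` is an illustrative choice and not part of any DeepSeek tooling.

```python
def active_fraction(total_params: float, active_params: float) -> float:
    """Return the share of a model's parameters that are active at once."""
    if total_params <= 0:
        raise ValueError("total_params must be positive")
    return active_params / total_params


# Parameter counts in billions, as quoted in this article.
MODELS = {
    "DeepSeek-V4-Pro": (1600.0, 49.0),
    "DeepSeek-V4-Flash": (284.0, 13.0),
}

for name, (total_b, active_b) in MODELS.items():
    share = active_fraction(total_b, active_b)
    print(f"{name}: {share:.1%} of parameters active")
```

On these figures, V4-Pro activates roughly 3% of its parameters and V4-Flash under 5%, which is why the compute cost tracks the active count rather than the headline total.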

What it actually does

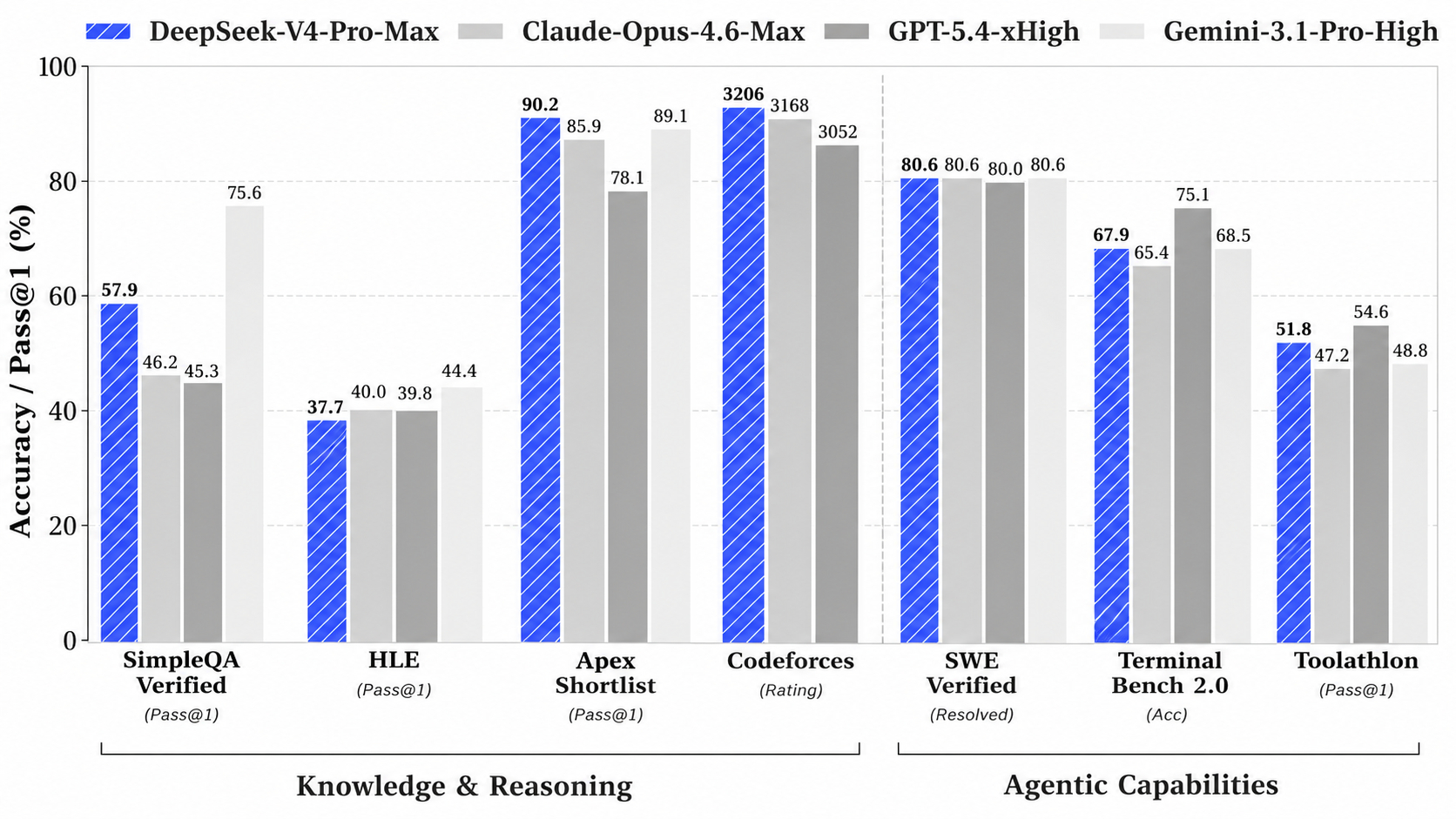

DeepSeek's own benchmarks show V4-Pro leading every open-source model in coding and mathematics, with a LiveCodeBench score of 93.5 that surpasses the previous best. On world-knowledge benchmarks, it trails only Google's Gemini 3.1 Pro, a closed, proprietary model. The gap to GPT-5.4 and Gemini 3.1 Pro is described as "approximately three to six months", which is remarkably close for an open-source model.

The architectural breakthrough is what DeepSeek calls "Hybrid Attention Architecture": a combination of Compressed Sparse Attention and Heavily Compressed Attention that sharply reduces the cost of processing very long conversations. At 1 million tokens of context, V4-Pro uses only 27% of the inference compute that V3.2 required. V4-Flash brings that figure down to 10%.

On pricing, the contrast with Western labs is stark. V4-Pro is priced at $3.48 per million output tokens. OpenAI's GPT-5.4 costs $30 for the same volume, and Anthropic's equivalent costs $25. DeepSeek expects prices to fall further as Huawei scales up production of its Ascend 950 chips, which were used to train V4. That is a significant milestone for China's domestic AI hardware independence.
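The price gap is easier to judge against a workload. The sketch below is a rough illustration only: the prices are the ones quoted above, while the monthly volume of 50 million output tokens and the helper name `output_cost` are assumptions made up for the example.

```python
# Output prices in USD per million tokens, as quoted in this article.
PRICE_PER_MILLION_OUTPUT = {
    "DeepSeek V4-Pro": 3.48,
    "OpenAI GPT-5.4": 30.00,
    "Anthropic equivalent": 25.00,
}


def output_cost(price_per_million: float, output_tokens: int) -> float:
    """Cost in USD of generating `output_tokens` at the given price."""
    return price_per_million * output_tokens / 1_000_000


# Hypothetical monthly usage for a school deployment (an assumption).
MONTHLY_OUTPUT_TOKENS = 50_000_000

baseline = PRICE_PER_MILLION_OUTPUT["DeepSeek V4-Pro"]
for name, price in PRICE_PER_MILLION_OUTPUT.items():
    cost = output_cost(price, MONTHLY_OUTPUT_TOKENS)
    ratio = price / baseline
    print(f"{name}: ${cost:,.2f} per month ({ratio:.1f}x V4-Pro)")
```

At that assumed volume the bill comes to about $174 for V4-Pro against $1,500 and $1,250 for the two Western models, a ratio of roughly 8.6x and 7.2x. Input-token prices are not quoted here, so a real budget would need those as well.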

Why this matters for AI-ready schools

The implications for education run deeper than a benchmark table.

Access. Open-source, frontier-level intelligence at near-zero cost means schools in India, Africa, and Southeast Asia can now build on models that match the best in the world. AI equity — every child deserving the same quality of AI support regardless of where they were born — just got meaningfully more achievable.

Context. A 1-million-token context window means an AI can hold an entire curriculum in mind simultaneously. A student's full year of notes, essays, and assessments could be processed in a single conversation. For tools like Cypher, which tracks how a student thinks and learns over time, models with this kind of memory are the infrastructure that makes genuine personalisation possible at scale.
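As a back-of-the-envelope check on the context claim, the sketch below estimates whether a student's written work for a year would fit in a 1-million-token window. It assumes the article's own ratio of about 750,000 words per million tokens; real tokenizers vary by language and content. The word counts, the reserve for the model's reply, and the function names are all hypothetical.

```python
import math

CONTEXT_WINDOW_TOKENS = 1_000_000
# Derived from the article's "1 million tokens ~ 750,000 words" (approximate).
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int) -> int:
    """Rough token estimate for a given number of words."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def fits_in_context(word_counts: list[int], reserve_tokens: int = 50_000) -> bool:
    """True if all documents plus a reserve for the reply fit in the window."""
    total = sum(estimate_tokens(words) for words in word_counts)
    return total + reserve_tokens <= CONTEXT_WINDOW_TOKENS


# Hypothetical student: notes, essays, and assessments for one year (in words).
student_year = [120_000, 40_000, 15_000]
print(fits_in_context(student_year))
```

Under these assumptions a full year of one student's work uses well under a third of the window, which leaves room for curriculum material in the same conversation.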

Sovereignty. When frontier-level AI is freely available for any school to run locally, the question shifts from "Which AI company should we trust with our students' data?" to "How do we build our own, on our terms?" Matrix — AI Ready School's on-premise infrastructure — exists precisely for this moment. Schools with local AI infrastructure are now positioned to run world-class open models entirely within their own walls.

Speed. DeepSeek V4 was built under US export restrictions, using domestic Chinese chips, and arrived within six months of the world's leading models at roughly a ninth of GPT-5.4's output price. The lesson for every school leader watching this: the pace of capability improvement is not slowing. Schools that treat AI infrastructure as a permanent, evolving foundation will be the ones that stay relevant.

The number that deserves to sit with you

$3.48 per million output tokens versus $30. That is not a pricing footnote. That is a signal about where AI capability is heading: cheaper, faster, more open, and increasingly independent of controlled supply chains. For schools building long-term AI strategy, this is the kind of update that should prompt a conversation, not just a read.