Chiranjeevi Maddala

May 12, 2026

Every generation has a foundational literacy that separates the people who shape the world from the people who are shaped by it. For the previous generation, it was digital literacy. For this generation, it is something more specific, more demanding, and more consequential. We call it AI-Sense. And almost no school in India is teaching it.

In March 2026, three independent research teams published findings that should have made front pages. The papers, referenced as 2604.04721, 2604.04237, and 2604.04387 in the preprint literature, reached the same conclusion from three different methodological angles: students who use AI tools regularly for academic work show a measurable decline in independent reasoning capability over time. Not immediately. Not dramatically. Gradually, in the way that any skill atrophies when it stops being exercised.

The researchers were careful about what they were and were not claiming. They were not claiming that AI is harmful. They were not claiming that students should not use AI. They were claiming something more precise: that AI tools designed to optimise for engagement and output production, used without structured pedagogical guidance, tend to replace the cognitive effort that builds capability rather than support it. The student who uses AI to produce an essay is not developing the thinking that essay-writing is supposed to develop. The student who uses AI to generate code is not developing the computational reasoning that coding is supposed to develop.

This is not an argument against AI in education. It is an argument for a specific kind of AI education that almost no school is currently providing. It is an argument for AI-Sense.

The question is not whether children will use AI. They already do. The question is whether they will understand it well enough to use it without being used by it.

AI-Sense is not a technical skill. It is not the ability to write code, train machine learning models, or understand neural network architectures. Those are valuable capabilities, and the NEO AI Innovation Lab develops them in students who want to go deeper. But they are not AI-Sense.

AI-Sense is a cognitive orientation toward AI systems. It is the set of habits of mind that allow a person to engage with AI output critically rather than passively, to understand what AI can and cannot do without needing to understand how it does it at a technical level, and to use AI as an instrument of human thinking rather than a substitute for it.

A student with AI-Sense looks at an AI-generated essay and asks: what sources is this drawing on? What assumptions is it making? What perspective is it leaving out? Where is this wrong, and how would I know? A student without AI-Sense reads the same essay and decides whether it sounds right.

A student with AI-Sense uses an AI image generator and asks: what aesthetic choices is this making? Whose visual vocabulary is this trained on? What cannot be expressed through this tool? A student without AI-Sense clicks generate until something looks good.

A student with AI-Sense encounters an AI recommendation — for a product, a piece of content, a decision — and asks: what is this system optimising for? Whose interests does that serve? What am I not being shown? A student without AI-Sense follows the recommendation.

The difference between these two students is not technical knowledge. It is a disposition toward AI systems, a reflex of critical engagement, that must be developed deliberately through educational experience or it does not develop at all. This is what makes AI-Sense a foundational literacy rather than a subject. It is not a class you take. It is a way of thinking you develop through sustained, structured, pedagogically intentional engagement with AI as an object of inquiry.

AI-Sense has five components. Each one is teachable. Each one requires a specific kind of learning experience to develop. And each one is almost entirely absent from the AI education programs that most Indian schools are currently implementing.

The first and most immediately valuable component of AI-Sense is output evaluation: the ability to evaluate AI output critically. This means more than fact-checking. It means understanding that AI systems have systematic biases that produce systematic errors, that the fluency of AI-generated text is not a reliable indicator of its accuracy, that confident-sounding output can be wrong in ways that are not immediately detectable, and that the errors AI makes are often not random but patterned in ways that reflect the training data and optimisation objectives of the system.

A student who has developed output evaluation capability does not trust AI output because it sounds right. They interrogate it. They look for the specific categories of error that AI systems are known to produce: factual errors that are plausible, logical errors hidden inside arguments that look structurally sound, perspective omissions that are invisible unless you know what is missing, and category errors that arise from training data that does not represent the full range of human experience.

Developing this capability requires repeated practice in comparing AI output to authoritative sources, identifying specific errors and understanding why those errors occurred, and building a personal taxonomy of AI failure modes that becomes more refined with each encounter. This is the kind of learning that happens in Zion's Research Hub when Research Buddy is used the way it is designed to be used: not as an answer machine, but as a research partner whose outputs are always interrogated, always compared, always evaluated against independent sources.

The student who knows where AI tends to be wrong is more valuable than the student who knows how to make AI produce impressive-looking output. The first skill is AI-Sense. The second is AI fluency. They are not the same thing, and only one of them matters in a world where AI fluency is universal.

The second component of AI-Sense is limitation awareness. AI systems have fundamental limitations that are structural rather than accidental. They will not be fixed by the next version. They are properties of what AI systems are, not deficiencies of current implementations. A person with AI-Sense knows what these limitations are and incorporates them into every AI interaction.

The most important limitation is temporal. AI systems are trained on data up to a specific point in time and have no built-in knowledge of events, discoveries, or developments after that point. A student who uses an AI system to research a rapidly evolving field without understanding this limitation is building their understanding on a foundation that may be months or years out of date.

The second fundamental limitation is perspectival. AI systems are trained on human-generated data, and human-generated data does not represent all human perspectives equally. The perspectives that are most represented in the training data have the most influence on the system's outputs. A student who uses AI to understand a contested historical event, a cultural practice, or a social phenomenon without understanding this limitation is receiving a view through a particular lens without knowing the lens exists.

The third fundamental limitation is contextual. AI systems process the context they are given, but they cannot know what context they have not been given. They cannot know that the student asking about agricultural AI tools is from a rural area where the conditions reflected in the training data do not apply. They cannot know that the medical question being asked involves a demographic that was underrepresented in the clinical data the system was trained on. A student with limitation awareness knows to supply context deliberately and to question whether the system's outputs account for their specific situation.

Limitation awareness is developed through structured encounters with AI failure. This is counterintuitive but essential. Students who only see AI succeed do not develop limitation awareness. Students who are systematically exposed to cases where AI fails, and who are asked to explain why the failure occurred and what the system would have needed to succeed, develop a working understanding of how AI systems behave. That understanding produces genuine limitation awareness without requiring technical expertise in how AI systems are built.

The third component of AI-Sense is bias recognition. AI systems encode the biases of the humans who created them, the data they were trained on, and the objectives they were optimised for. These biases are often invisible to users who have not been trained to look for them. A student with AI-Sense has developed the ability to notice bias in AI output and to ask what systemic factors produced it.

Bias in AI output takes many forms. It appears in whose stories are told and whose are left out in AI-generated historical narratives. It appears in which aesthetic sensibilities dominate AI-generated images and which are systematically underrepresented. It appears in which economic assumptions are embedded in AI-generated financial advice and which are treated as universal when they are culturally specific. It appears in which bodies are represented as default in AI-generated visual content and which are treated as variations from a norm.

Teaching bias recognition requires exposing students to AI output across a range of domains and asking them to notice patterns: whose perspective is centred, whose is absent, what assumptions are being made about the user, and what the system is optimising for. This kind of attention is not technically demanding. It is, however, the kind of attention that must be explicitly taught, because students who have not been taught to look for bias do not notice it. They absorb it.

Cypher's questioning-first design is specifically engineered to develop this kind of critical attention. When Cypher asks a student to evaluate a claim, to identify what evidence would be needed to support a conclusion, or to consider whose perspective is represented in a piece of content, it is not simply teaching analytical skills. It is building the habit of critical attention that is the foundation of bias recognition in AI output.

The fourth component of AI-Sense is the most creative and the most practically valuable for a student's long-term professional life: the ability to design effective collaborations between human intelligence and artificial intelligence that produce outcomes neither could produce alone.

This is distinct from simply knowing how to use AI tools. A student who knows how to use AI tools can produce AI-assisted outputs. A student who understands human-AI collaboration design knows how to structure a working process so that AI handles the dimensions of a task where it has genuine advantages — speed, scale, pattern recognition, information retrieval — while humans retain responsibility for the dimensions where human judgment is irreplaceable: value alignment, contextual sensitivity, ethical evaluation, creative direction, and the kind of integrative thinking that connects disparate domains in ways that no training dataset could predict.

In practice, this means knowing when to use AI and when not to. It means knowing how to brief an AI system with the specificity that produces useful output. It means knowing how to evaluate AI output in the context of the task's requirements rather than in isolation. And it means knowing when AI output has served its purpose as a starting point and when to move beyond it with human judgment that the AI cannot supply.

The NEO AI Innovation Lab develops this capability through its structured project curriculum. When a NEO student builds an open-source AI application, they are not executing a technical specification. They are making a series of design decisions about what the AI component of their system should do, what the human component should do, how the outputs of each should be evaluated, and where the boundary between AI and human judgment should fall. These are collaboration design decisions. They require AI-Sense to make well.

The fifth component of AI-Sense is the one that education systems find most difficult to develop systematically: the capacity to reason about the ethical dimensions of AI systems and AI decisions with the same rigour that is applied to the technical and analytical dimensions.

Ethical grounding in AI is not a set of rules. The rules change too quickly and vary too much across contexts to be useful as a foundation. It is a set of questions that a person with AI-Sense knows to ask in every AI context: Who benefits from this system and who bears its costs? What would this system look like to someone from a different social position than the default user? What happens when this system fails, and who bears the consequences of that failure? What is being given up in exchange for the convenience this system provides? And at what point does delegating a decision to an AI system become an abdication of human responsibility?

These are not comfortable questions. They do not have simple answers. But they are the questions that the generation who will govern, regulate, design, and live with AI systems in every domain of their lives needs to be able to ask with fluency, precision, and genuine engagement. A student who has been taught to code but not to ask these questions has technical capability without the ethical orientation to deploy it responsibly. A student who has been taught to use AI tools but not to ask these questions is a sophisticated consumer of systems whose implications they cannot evaluate.

AI Ready School's philosophy, expressed through what we call the Thinking 2.0 framework, holds that ethical grounding is not an add-on to AI education. It is the foundation that every other component of AI-Sense rests on.

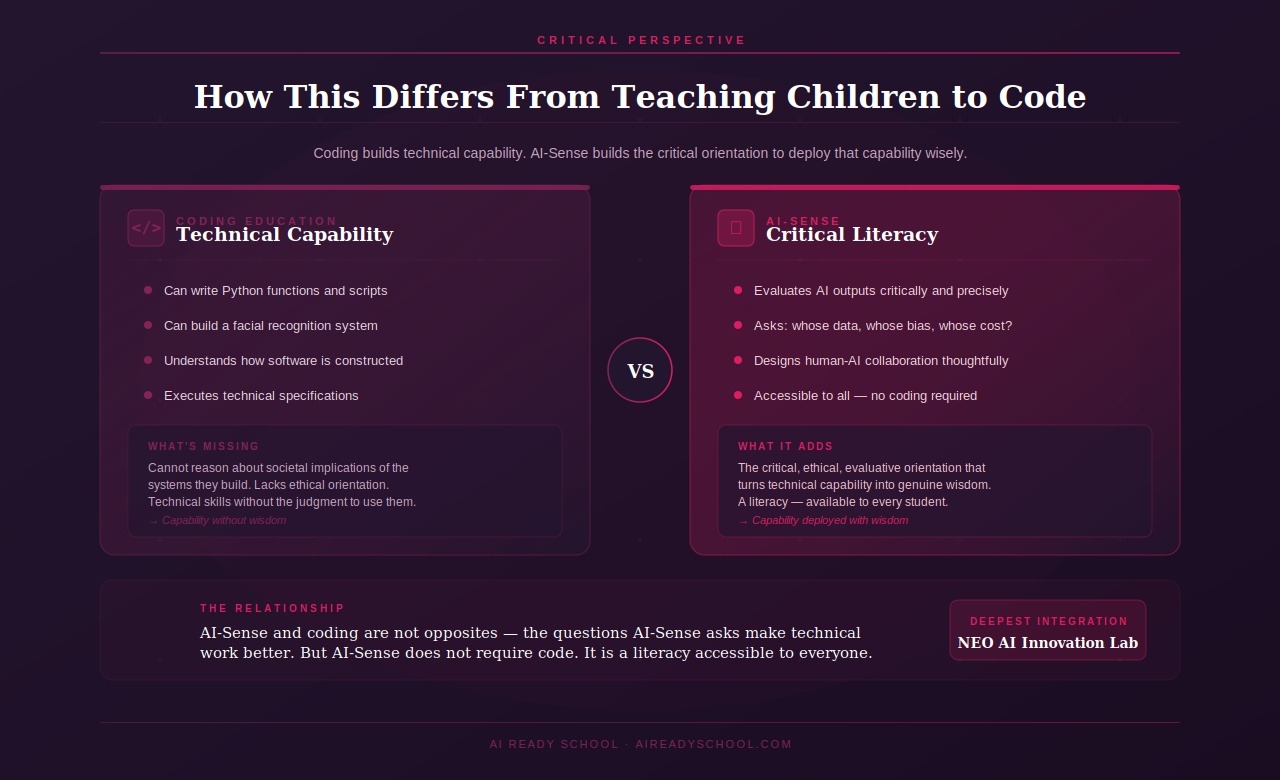

The coding education movement that swept through school systems globally in the 2010s rested on a sound intuition: if software is going to run the world, children who understand software will have more agency in it than children who do not. The implementation of that intuition, however, produced a generation of students who could write Python functions but could not explain the societal implications of the software systems they were taught to build.

Teaching children to code develops technical capability. It does not, by itself, develop the critical orientation toward technology that allows that capability to be deployed wisely. A student who can build a facial recognition system but cannot reason about the implications of deploying it in public spaces, about whose faces it was trained to recognise accurately and whose it was not, about what it means for a government or corporation to have that capability, has technical skills without the judgment to use them well.

AI-Sense is what coding education was missing. It is the critical, ethical, evaluative orientation toward AI systems that allows technical capability to become genuine wisdom about how to deploy that capability in the service of human flourishing rather than at its expense.

The relationship between AI-Sense and coding is not oppositional. The students in NEO AI Innovation Lab who develop the deepest AI-Sense are often the students who are also developing the strongest technical capabilities, because the questions that AI-Sense asks — whose data is this trained on? what is this system optimising for? what does this system assume about its users? — are questions that make technical work better, not just more ethically considered.

But AI-Sense does not require coding. A student who cannot write a line of code can develop sophisticated output evaluation capability, limitation awareness, bias recognition, collaboration design instincts, and ethical grounding. AI-Sense is a literacy, and like every literacy, it is accessible to everyone who is given the right educational environment to develop it.

The most dangerous misconception in AI education right now is the belief that exposure to AI tools constitutes AI education. It does not. A student who uses ChatGPT regularly develops familiarity with ChatGPT. They may develop skill at prompting ChatGPT. They do not, by virtue of that use, develop AI-Sense.

The difference is analogous to the difference between learning to drive and understanding how an engine works. A driver who has no understanding of how an engine works can operate a vehicle effectively in normal conditions. When something goes wrong — when the car behaves unexpectedly, when an unusual situation requires a decision that driving muscle memory cannot supply — the absence of mechanical understanding becomes a limitation. And in the increasingly AI-mediated world that this generation will inhabit, the situations where AI behaves unexpectedly, where an unusual situation requires a decision that tool familiarity cannot supply, will not be rare. They will be constant.

The research published in March 2026 found this pattern empirically. Students who used AI tools regularly without structured pedagogical guidance developed tool familiarity but not the critical evaluation capability that would allow them to recognise when the tool was producing poor output, to identify why, and to compensate with human judgment. Their reliance on AI output increased while their ability to evaluate it decreased. This is precisely the opposite of what AI education should produce.

Cypher's questioning-first design represents the pedagogical alternative. Rather than optimising for output production, Cypher optimises for understanding development. Rather than providing answers that relieve the student of cognitive effort, it asks questions that demand it. The student who has learned with Cypher has not learned to use an AI tool more efficiently. They have learned to think more precisely, to evaluate evidence more critically, and to understand their own knowledge with more accuracy. They have developed AI-Sense in the most fundamental way: by engaging with AI in a context designed to make them think harder, not less.

Teaching a child to use ChatGPT is teaching them to drive. Teaching a child AI-Sense is teaching them to understand the road, the vehicle, and the decisions that must always remain theirs.

AI Ready School's pedagogical approach to AI-Sense development is expressed through what we call the Thinking 2.0 framework. Thinking 2.0 is not a curriculum supplement. It is a philosophical position about what education should produce in students who will live and work in an AI-mediated world.

The framework holds that the traditional educational focus on Thinking 1.0 — the ability to recall, understand, and apply knowledge — is necessary but insufficient for the world this generation will inhabit. Thinking 1.0 capabilities can be substantially replicated by current AI systems. A student whose primary educational development is in Thinking 1.0 is developing capabilities that will be increasingly commoditised over their career.

Thinking 2.0 is the set of capabilities that AI systems cannot replicate: the ability to evaluate AI output critically, to identify what is missing from AI-generated analysis, to ask the questions that an AI system was not designed to ask, to make the value judgments that require human experience and human accountability, and to take the creative risks that require genuine originality rather than pattern recombination.

The five components of AI-Sense are the practical expression of Thinking 2.0. Output evaluation, limitation awareness, bias recognition, human-AI collaboration design, and ethical grounding are the specific capabilities that allow a student to engage with AI as a genuinely thinking person rather than as a sophisticated user. They are the capabilities that the labour market is beginning to price at a significant premium, and that universities are beginning to use as selection criteria, precisely because they are rare — because most schools are teaching children to use AI without teaching them to think about it.

Thinking 2.0 is operationalised across the entire AI Ready School ecosystem. Cypher's Socratic questioning model develops output evaluation and limitation awareness through every learning interaction. Zion's Research Hub develops bias recognition and collaboration design through structured research methodology. The NEO AI Innovation Lab develops all five components through original research, open-source project building, and competition preparation. And Morpheus gives teachers the tools to assess Thinking 2.0 development across their students and identify where specific interventions are needed.

The framework is also explicitly connected to Zion's Thinking Playground — a dedicated tool for Design Thinking, Computational Thinking, and AI Thinking that gives students a structured environment to practise the specific cognitive moves that Thinking 2.0 requires. The Thinking Playground is not a quiz. It is a coaching environment that asks students to apply AI-Sense to real problems, real AI outputs, and real design challenges in a context where the process of thinking is valued alongside the quality of the outcome.

The urgency argument for AI-Sense is straightforward but worth stating precisely, because the urgency is real and the window for action is shorter than most school leaders realise.

The students who are in Grades 6 through 10 in Indian schools right now will enter university and early career contexts between 2028 and 2034. The labour market they will enter is not the labour market of 2024. It is a labour market that a 2026 PwC analysis described as bifurcating between professionals who work with AI in ways that compound their human judgment and professionals who are displaced by AI systems that can replicate the tasks they were trained to perform.

The difference between these two groups is not technical sophistication. The most technically sophisticated AI users in the world are the engineers who build AI systems, and even they are experiencing displacement in the components of their work that are most automatable. The difference is AI-Sense: the capacity to bring human judgment, human creativity, human ethical reasoning, and human contextual understanding to bear on AI-assisted work in ways that the AI component of the collaboration cannot supply.

A student who enters the labour market in 2030 with strong AI-Sense has a compounding advantage. Every AI system they encounter, every AI-assisted decision they make, every AI-human collaboration they design will be shaped by their capacity to think critically about what AI is doing and what it cannot do. This capacity does not depreciate as AI systems improve. If anything, it becomes more valuable, because the more capable AI systems become, the more important it is that the humans working with them can recognise their limitations, identify their biases, and supply the judgment that AI systems cannot.

A student who enters the labour market in 2030 with strong AI fluency but weak AI-Sense is vulnerable. They are effective operators of current AI systems whose operation is increasingly automated. The tasks that require only AI fluency will be the first to be further automated. The tasks that require AI-Sense, which is fundamentally a human cognitive capability that AI systems cannot replicate, will be the last.

The most common question we hear from school leaders who understand AI-Sense is: what does teaching it actually look like? It looks different from any existing subject in the curriculum, and that unfamiliarity can be a barrier to adoption.

AI-Sense education does not look like a computer class. Students are not learning to operate software. It does not look like a coding class. Students are not writing programs. It does not look like a media literacy class, though it shares some of that class's orientation. And it does not look like an ethics class, though ethical reasoning is central to it.

AI-Sense education looks like guided inquiry. Students encounter AI output — an essay, an image, a recommendation, a prediction — and are asked to investigate it: what is this claiming? How do I know if it is right? What is it missing? What assumptions is it making? Who benefits from this claim and who is harmed by it? What would I need to know to evaluate this properly?

These questions are asked repeatedly, across many AI outputs, across many subjects, across many contexts, until the asking becomes automatic. Until a student's first instinct when they encounter AI output is not acceptance or rejection but inquiry. Until AI-Sense is not a class they attended but a way of thinking they have internalised.

This is what Cypher's Socratic model produces through every learning interaction. This is what the NEO AI Innovation Lab produces through its structured research and project curriculum. This is what the Thinking Playground in Zion produces through its guided AI-Sense challenges. And this is what the Thinking 2.0 framework makes possible across an entire school's educational program when it is implemented with the intentionality and structural support that genuine educational change requires.

The generation that develops AI-Sense will not be defined by what AI did for them. It will be defined by what they were able to do with AI that would not have been possible without them. That is the promise of AI-Sense education. And it is a promise that only a school system willing to go beyond tool adoption can deliver.

AI Ready School provides a complete AI ecosystem for K-12 schools, including Cypher (personalised AI learning companion), Morpheus (AI teaching agent), Zion (safe AI tool suite with Thinking Playground), NEO (AI Innovation Labs), and Matrix (sovereign AI infrastructure). Every product in the ecosystem is designed to develop AI-Sense, not AI dependency.

To learn about our Thinking 2.0 framework and how it can be implemented across your school's educational program, reach out at hey@aireadyschool.com or call +91 9100013885.
