Chiranjeevi Maddala

April 29, 2026

A student who scores 74% on every test is not, by any reasonable definition, a student without learning gaps. They are a student whose gaps are invisible to every instrument the school is using to detect them. This is the problem AI-based gap detection is designed to address, and here is exactly how it does it.

The ASER 2024 report found that 43.3% of Class 8 students in rural India cannot solve a basic division problem. These students have been assessed continuously for eight years. Their teachers have marked their work, their school has tracked their scores, and the system has moved them forward through the curriculum. And yet the gap — a foundational mathematical gap that is now compounding across every subsequent mathematical concept — was never precisely detected.

This is not a failure of teacher commitment. It is a failure of assessment architecture. Traditional assessment is designed to measure whether students can reproduce curriculum content on a given day, under given conditions, in a given format. It is not designed to detect the specific conceptual fracture points where understanding breaks down, the hidden patterns in how a student engages with difficulty that predict future failure, or the precise distance between what a student knows and what they need to know for the next concept to make sense.

These are the gaps that AI detects. Not because AI is smarter than teachers, but because AI is present in every interaction, processes signals that human observation cannot simultaneously track across 40 students, and maintains a persistent model of each student that deepens with every session. The result is a gap-detection capability that is qualitatively different from anything traditional assessment produces.

This blog explains exactly how Cypher and Morpheus detect learning gaps across all four dimensions of the 360-degree student profile — Knowledge, Learning Style, Cognitive Behaviour, and Skills — and what happens when a gap is found. Each section uses anonymised real examples from our partner school implementations to show not what the AI claims to do, but what it actually did, with which specific signals, and what the outcome was.

The gap a test cannot find is the gap that compounds. The gap that compounds is the one that determines a child's trajectory.

Before explaining how AI detects gaps, it is worth being precise about why traditional assessment misses them. There are four structural reasons, each of which corresponds to a specific capability that AI assessment adds.

First: point-in-time measurement. A test measures what a student can do on the day the test is administered. It does not measure whether that understanding was present the week before, whether it will be present the week after, or whether the performance was produced by genuine understanding or by a combination of memorisation, test-taking strategy, and lucky guessing. A student who understood fractions three weeks ago and has since forgotten them due to insufficient consolidation will produce the same test score as a student who never understood them. The test cannot distinguish between these situations. AI interaction data accumulated over weeks and months can.

Second: format dependency. The same student may perform very differently on a multiple-choice test, a short-answer test, an oral assessment, and a project-based assessment of the same content. A student with strong conceptual understanding but weak test-taking skills underperforms on timed examinations. A student with weak conceptual understanding but strong pattern-recognition skills can score well on multiple-choice formats. Traditional assessment that uses only one format is not measuring understanding. It is measuring performance in that format. AI interaction data captures student responses across multiple types of engagement, producing a format-independent picture of understanding.

Third: aggregate scoring. A test score of 72% tells a teacher that the student got approximately 72% of the questions right. It tells them nothing about which 28% were wrong, whether those errors were systematic or random, whether they reflect a single conceptual gap or multiple unrelated misunderstandings, or whether the errors are concentrated in a topic area that is foundational to subsequent learning. AI tracking at the concept level captures not just performance averages but the specific topology of a student's understanding — which concepts are solid, which are fragile, and which are missing entirely.

Fourth: social performance pressure. Many students, particularly at the secondary level, are skilled at appearing to understand something they do not. They copy homework from classmates. They produce correct-looking answers by recognising question patterns without understanding the underlying concept. They ask teachers to re-explain in ways that produce additional explanation rather than revealing specific confusion. In a classroom with 40 students, a teacher cannot maintain sufficiently close attention to every student to detect these performance-without-understanding patterns. AI interaction, particularly Cypher's questioning-first model, creates conditions where this concealment is much harder — because the AI responds to the student's actual reasoning rather than to the appearance of correct output.
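The first and third reasons above can be made concrete with a small sketch. Everything in it is invented for illustration: the session scores, the concept names, and the 0.7 mastery threshold are assumptions, not Cypher data or Cypher's method. The point is only that a history of observations and a per-concept breakdown carry information that a single aggregate score discards.

```python
from collections import defaultdict

def classify_history(scores, mastery=0.7):
    """Label one concept from chronological scores; the last entry is test day.

    The 0.7 mastery threshold is an illustrative assumption.
    """
    if scores[-1] >= mastery:
        return "secure on test day"
    if max(scores[:-1], default=0.0) >= mastery:
        return "understood earlier, since lost"
    return "never secure"

def concept_breakdown(responses):
    """Turn (concept, correct) pairs into a per-concept accuracy map."""
    totals = defaultdict(lambda: [0, 0])  # concept -> [correct, attempted]
    for concept, correct in responses:
        totals[concept][1] += 1
        if correct:
            totals[concept][0] += 1
    return {c: right / tried for c, (right, tried) in totals.items()}

# Two hypothetical students with the same test-day score on fractions.
forgot = classify_history([0.80, 0.85, 0.60, 0.40])
never = classify_history([0.30, 0.40, 0.35, 0.40])

# One hypothetical 25-question test: 18 correct overall (72%).
responses = (
    [("place_value", True)] * 7
    + [("fractions", True)] * 8
    + [("division", True)] * 3
    + [("division", False)] * 7
)
overall = sum(correct for _, correct in responses) / len(responses)
by_concept = concept_breakdown(responses)

print(forgot, "|", never)
print(f"overall={overall:.2f}", by_concept)
```

Both hypothetical students look identical on test day, and the 72% aggregate hides the fact that every error sits in one concept. A real system would need far more than a fixed threshold, but the contrast between the two views is the same.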

Knowledge gaps, in the AI Ready School framework, are not simply topics a student has not yet covered. They are specific points in a student's conceptual network where understanding breaks down — the precise location where a student's knowledge is fragile, missing, or incorrectly constructed.

Cypher maps each student's knowledge as a connected network rather than a list of topics covered. Each concept has connections to the concepts that precede it (prerequisites) and the concepts that build on it (dependents). When a student shows inconsistent performance on a concept, Cypher does not simply flag the concept as a gap. It investigates the network: is the performance inconsistency because the concept itself is not understood, or because a prerequisite concept is missing and the student is compensating with surface-level pattern recognition?

This distinction matters enormously for intervention design. A student who does not understand quadratic equations may need direct instruction on quadratic equations. Or they may need direct instruction on factorisation, without which quadratic equations cannot be genuinely understood. These are different interventions. A test score of 55% on quadratic equations does not tell you which one to use. Cypher's knowledge network analysis does.
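The quadratic equations example above can be sketched as a walk over a prerequisite graph. This is a minimal illustration under stated assumptions, not Cypher's actual implementation: the `PREREQUISITES` map, the `root_gaps` function, the mastery estimates, and the 0.6 threshold are all hypothetical.

```python
# Hypothetical prerequisite map: concept -> concepts it directly depends on.
PREREQUISITES = {
    "quadratic_equations": ["factorisation"],
    "factorisation": ["algebraic_expressions"],
    "algebraic_expressions": ["order_of_operations"],
    "order_of_operations": [],
}

def root_gaps(concept, mastery, threshold=0.6, _seen=None):
    """Follow weak prerequisites downward and return the deepest weak concepts.

    `mastery` maps concept -> estimated mastery in [0, 1]; concepts with no
    estimate are treated as weak. The 0.6 threshold is an assumption.
    """
    seen = set() if _seen is None else _seen
    if concept in seen:  # already explored via another path
        return []
    seen.add(concept)
    weak = [p for p in PREREQUISITES.get(concept, [])
            if mastery.get(p, 0.0) < threshold]
    if not weak:
        # No weak prerequisite: the gap, if any, is in this concept itself.
        return [concept] if mastery.get(concept, 0.0) < threshold else []
    roots = []
    for prereq in weak:
        for root in root_gaps(prereq, mastery, threshold, seen):
            if root not in roots:
                roots.append(root)
    return roots

# Same weak score on quadratics, two different underlying situations.
missing_prerequisite = {
    "quadratic_equations": 0.55, "factorisation": 0.40,
    "algebraic_expressions": 0.85, "order_of_operations": 0.90,
}
concept_itself = dict(missing_prerequisite, factorisation=0.80)

print(root_gaps("quadratic_equations", missing_prerequisite))
print(root_gaps("quadratic_equations", concept_itself))
```

In the first profile the walk stops at factorisation, so that is where instruction would start. In the second, factorisation is secure and the gap is reported on quadratic equations directly. The same 55% maps to two different interventions.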

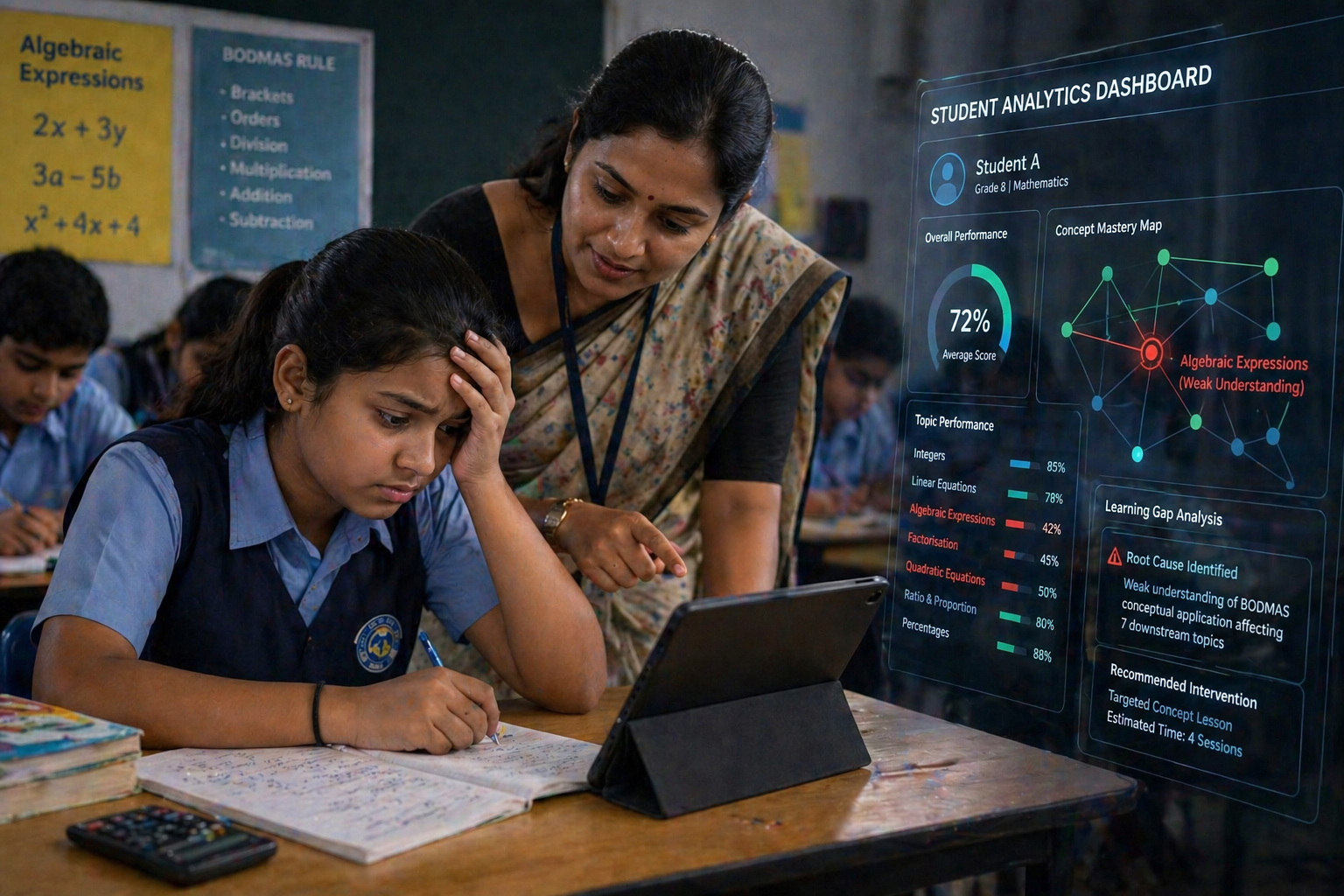

Anonymised case · Grade 8 Mathematics · CBSE · Partner school, Raipur

Student A scored consistently between 68% and 74% on Mathematics unit tests across Class 7 and the first half of Class 8. Teachers considered her a mid-performing student with no specific concerns. Her Morpheus progress dashboard was reviewed as part of a routine academic coordinator check in Week 6 of our implementation.

When the academic coordinator examined the Cypher interaction data, it revealed not a generalised mathematics difficulty but a specific and precise fracture point: Student A's understanding of algebraic expressions, introduced in Grade 6, had a structural error. She had learned to apply the BODMAS rule procedurally but had not developed a conceptual understanding of why the rule applies. In Grade 6, this error had no visible consequences, because the test questions were simple enough that procedural application produced correct answers.

By Grade 8, however, the same structural error was propagating through every algebraic concept that built on expression simplification. Her 70% average in Grade 8 Mathematics was not a generalised weakness. It was the surface signature of a single conceptual error from Grade 6 that was now affecting seven distinct curriculum topics.

Signal detected: Inconsistent performance on algebraic simplification tasks

Student answered correctly when questions used familiar number patterns, but made systematic errors when variable coefficients changed — indicating procedural rule application without conceptual understanding.

What testing missed: Three unit test scores of 68%, 71%, and 73% across Class 7 — within normal range, no flags raised, no intervention triggered.

The intervention took four sessions. It was guided by Cypher's gap detection and delivered through a targeted Morpheus lesson that the teacher created specifically to address conceptual understanding of BODMAS rather than procedural recall. Student A's subsequent performance on the seven affected topics improved by an average of 18 percentage points over the following six weeks. The Grade 6 fracture was fixed in Grade 8. Left unaddressed, it would have produced progressively worsening mathematics performance through Grades 9 and 10: invisible on current assessments, catastrophic in board examinations.

Learning style gaps are a category that most assessment systems do not track at all, because most assessment systems use a single format for all students and interpret performance differences as differences in ability rather than differences in format fit. This conflation — treating format-dependent performance as an ability measure — is one of the most consequential errors in educational assessment.

Cypher tracks not just what a student knows but how they most effectively develop understanding. This is not the fixed learning style typology (visual, auditory, kinaesthetic) that research has shown to be oversimplified. It is a dynamic, context-specific profile of which instructional approaches produce the most durable understanding for this student, for this type of concept, in this subject. A student may develop strong understanding of abstract mathematical concepts through visual representation, while developing stronger understanding of historical causation through discussion and argument. These are not contradictory. They are subject-specific and concept-type-specific learning pattern data.

Anonymised case · Grade 5 Science · ICSE · Partner school, Hyderabad

Student B was assessed as significantly below grade level in Science based on written test performance across Classes 3, 4, and 5. He had been recommended for additional support sessions three times. Two learning support teachers had worked with him over 18 months with limited visible progress. The school was beginning to consider whether he might need a formal learning needs assessment.

When Student B began interacting with Cypher, the platform's learning style tracking produced a striking finding within the first three weeks. Student B's comprehension of scientific concepts was, in fact, at or above grade level — when those concepts were presented through concrete physical examples, followed by discussion, before any written or diagrammatic representation was introduced. When the sequence was reversed (written explanation first, then example), comprehension dropped significantly. When the concept was presented only in written form without a concrete example, comprehension was near zero.

His test scores were measuring not his scientific understanding but his ability to extract meaning from written explanations — a skill that is separate from scientific understanding and that he had not yet developed to a level that allowed his actual scientific comprehension to be visible through the test format the school was using.

Signal detected: Comprehension score 340% higher on concrete-first vs. abstract-first presentation sequences

Over 47 Cypher sessions, Student B consistently demonstrated strong retention when physical or real-world examples preceded formal explanation. When formal explanation came first, interaction patterns showed repeated re-engagement with the same concept — indicating the explanation was not being understood.

What testing missed: Three years of written Science tests showing 35–45% average performance — interpreted as general Science difficulty. No format-specific assessment was ever administered.
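It helps to be precise about what "340% higher" means arithmetically. The sketch below uses invented comprehension scores, which are not Student B's data and were chosen only so that the relative difference comes out near 340%. The `percent_higher` helper is a hypothetical name.

```python
from statistics import mean

def percent_higher(a, b):
    """How much higher `a` is than `b`, as a percentage of `b`."""
    return (a - b) / b * 100.0

# Invented comprehension scores in [0, 1]; not Student B's data.
concrete_first = [0.60, 0.66, 0.72]
abstract_first = [0.12, 0.15, 0.18]

gain = percent_higher(mean(concrete_first), mean(abstract_first))
ratio = mean(concrete_first) / mean(abstract_first)
print(f"{gain:.0f}% higher, i.e. {ratio:.1f}x the abstract-first score")
```

A score that is 340% higher is 4.4 times the baseline, not 3.4 times. The same comparison could be run per subject or per concept type, which is the granularity the learning pattern profile described above would need.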

The intervention did not require additional learning support. It required the teacher to modify the instructional sequence for this student — presenting concrete examples before written explanations in all Science content. Cypher's subsequent interactions were calibrated to this sequence automatically. Within eight weeks, Student B's in-class responses and Cypher interaction performance were at grade level. His written test performance improved more slowly, because written format processing is a separate skill that required separate, targeted development. But the gap that had been classified as a Science gap was correctly identified as an instructional format gap — and treated accordingly.

Cognitive behaviour gaps are the category that most directly predicts long-term academic outcomes — and the category that traditional assessment is least equipped to detect. They include patterns of engagement, persistence, self-monitoring, and metacognitive awareness that determine not just current performance but the developmental trajectory that current performance will produce.

A student whose cognitive behaviour profile shows declining persistence across a term is a student at risk, even if current test scores are stable or rising. The declining persistence means that the student is exerting less cognitive effort per task over time — a pattern that produces short-term stability (because the remaining effort is concentrated on tested topics) and long-term deterioration (because the reduced engagement is producing weaker, more fragile knowledge that will fail under examination conditions).

Cypher tracks cognitive behaviour through the patterns it observes in every student interaction: how long a student engages with a difficult problem before disengaging or requesting a scaffold, whether students show metacognitive awareness (noticing they do not understand something before being told), whether student questions show curiosity that extends beyond the immediate task, and whether student engagement patterns change across different conditions — time of day, subject, concept difficulty level, or proximity to examination periods.

Anonymised case · Grade 10 · Mixed subjects · CBSE · Partner school, Bangalore

Student C was one of the highest-performing students in her class — consistent 88–92% average across all subjects in Classes 8, 9, and the first half of Class 10. She was considered a low-intervention, high-capability student. Her teacher's attention was directed toward students performing below grade level.

The Morpheus monitoring dashboard flagged a cognitive behaviour pattern in Week 9 of the implementation that the teacher had not observed: over the preceding six weeks, Student C's average time-on-task before requesting scaffolding had dropped from 8.2 minutes to 2.7 minutes. Her correct answer rate had remained stable because she was applying the scaffold efficiently once received. But she was no longer attempting difficult problems independently for meaningful durations. She had, at some point in the six-week window, shifted from a student who worked through difficulty to a student who used the AI to bypass it.

In a high-performing student, this pattern is particularly dangerous because it is invisible on test scores. Student C's 90% average was masking a significant erosion of productive struggle behaviour that would, if uncorrected, produce sharp underperformance in the Class 10 board examination — where AI assistance is not available and the paper is specifically designed to reward the kind of sustained independent engagement that Student C was no longer practising.

Signal detected: Persistence duration dropped 67% over 6 weeks — from 8.2 to 2.7 minutes average before scaffold request

Correct answer rate remained stable across the same period — indicating that performance metrics had completely decoupled from engagement quality. Test scores could not have detected this pattern.

What testing missed: Five consecutive test scores of 89%, 91%, 88%, 90%, and 92% — showing no variation that would flag a concern. Zero teacher attention directed to this student.
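The 67% figure follows directly from the two time-on-task averages, and the "decoupling" described above can be expressed as a simple check. This is a sketch under assumptions: the function names, the 50% drop threshold, the 0.05 accuracy tolerance, and the 0.90 correct-answer rate are hypothetical. Only the 8.2 and 2.7 minute figures come from the case.

```python
def percent_change(before, after):
    """Signed percentage change from `before` to `after`."""
    return (after - before) / before * 100.0

def engagement_decoupled(minutes_before, minutes_after,
                         accuracy_before, accuracy_after,
                         drop_threshold=-50.0, accuracy_tolerance=0.05):
    """True when persistence falls sharply while accuracy stays flat.

    Both thresholds are illustrative assumptions.
    """
    persistence_change = percent_change(minutes_before, minutes_after)
    accuracy_stable = abs(accuracy_after - accuracy_before) <= accuracy_tolerance
    return persistence_change <= drop_threshold and accuracy_stable

# 8.2 and 2.7 minutes are from the case; the 0.90 accuracy values are assumed.
change = percent_change(8.2, 2.7)
flagged = engagement_decoupled(8.2, 2.7, 0.90, 0.90)
print(f"persistence change: {change:.0f}%, flagged: {flagged}")
```

The check fires only when both conditions hold together. A drop in persistence accompanied by falling accuracy would already be visible in scores; it is the combination of falling persistence and stable accuracy that tests cannot see.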

The intervention in this case was not content-based. It was interaction-design-based. Cypher's scaffold delivery was adjusted to require a longer independent engagement window before assistance was available for this student specifically. The teacher was alerted through the Morpheus dashboard and had a targeted conversation with Student C about the pattern — not accusatory but direct: "The data shows you are getting the right answers, but you are not spending as much time working on difficult problems yourself as you were earlier in the term. That matters for your board examination."

Student C's subsequent board examination performance was among the top five in her school. The six-week pattern, detected and corrected two months before the examination, did not determine the outcome. It would have, undetected.

The most dangerous gap in a high-performing student is not one that shows up in their scores. It is one that shows up in how they earned those scores.

Skills gaps are the dimension most directly connected to what NEP 2020 and the broader education reform agenda identify as the primary outcome gap in Indian education: the difference between students who can recall and reproduce curriculum content and students who can genuinely use knowledge across contexts, communicate findings clearly, think analytically under conditions of uncertainty, and collaborate productively on complex problems.

Traditional examinations are not designed to assess these skills. Even at the highest cognitive levels of board examination papers, the assessment is conducted within a familiar format, under time-limited conditions, and with questions that, while demanding, are drawn from a known and bounded domain. A student can develop strong board examination performance without ever developing the analytical communication, creative application, and collaborative problem-solving skills that university and career contexts will require.

Cypher tracks skill development through four categories: analytical skills (pattern recognition, evidence evaluation, logical inference), communication skills (clarity of written expression, structural coherence of explanations, adaptation of explanation to context), creative skills (novel connection-making, original approach generation, unexpected application of known concepts), and collaborative skills (build-on-others, productive disagreement, contribution to shared outcomes). These are tracked through the student's interaction patterns across all subject areas and across Zion tool activities.

Anonymised case · Grade 9 · Multi-subject · ICSE · Partner school, Chennai

Student D performed at the 85th percentile of his class on all formal assessments. His teachers described him as academically strong. His parents had high expectations for his performance in the Class 10 ICSE examination. A skills gap analysis was generated as part of a routine mid-year portfolio review for all Grade 9 students.

The skills profile that emerged from nine months of Cypher interaction data was striking in its specificity. Student D's analytical skills were in the top quintile for his class — he was excellent at identifying patterns, evaluating evidence, and drawing logical conclusions from information. His knowledge domain was strong. His examination performance accurately reflected these genuine strengths.

But his communication skills profile showed a significant and systematic gap: Student D could identify a correct conclusion consistently, but could not explain his reasoning in a form that would be understandable to a reader who did not already know the answer. His written explanations assumed shared knowledge rather than constructing it for the reader. His answers were correct but opaque. Under ICSE examination conditions, where extended writing and explanation are significant components of the paper, this gap — invisible in class discussions where the teacher supplied the missing context — would produce a substantial under-representation of his actual capability.

Signal detected: Communication clarity score in 7th percentile for class despite analytical performance in 82nd percentile

Across 340 Cypher interactions involving written explanation tasks, Student D's responses consistently showed correct conclusions with incomplete reasoning chains. Evaluators reading his explanations without prior knowledge of the topic would understand the conclusion but not the path to it.

What testing missed: All 12 formal assessments showed strong performance interpreted as general academic capability. No assessment instrument required extended independent explanation that would have revealed the communication gap.
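A profile like Student D's stands out once skill dimensions are compared against each other rather than averaged. The sketch below uses only the two percentiles reported in the case (82 and 7); the `largest_skill_gap` helper is a hypothetical name, and how Cypher actually scores each dimension is not described here.

```python
def largest_skill_gap(percentiles):
    """Return (strongest, weakest, gap) for a map of skill -> class percentile."""
    strongest = max(percentiles, key=percentiles.get)
    weakest = min(percentiles, key=percentiles.get)
    return strongest, weakest, percentiles[strongest] - percentiles[weakest]

# Percentiles from the case; other skill categories are omitted, not assumed.
profile = {"analytical": 82, "communication": 7}
result = largest_skill_gap(profile)
print(result)
```

Averaging the two percentiles would give a middling figure that describes neither skill. Reporting the spread keeps the 75-point gap visible.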

The intervention combined targeted Morpheus lessons focused on structured explanation — the teacher created a sequence of "explain this to someone who has never heard of it" tasks — with specific Cypher interaction adjustments that asked Student D to fully justify every conclusion he reached rather than stating it. The Zion Research Hub's structured writing tools were incorporated to give Student D a framework for organising explanations. Over 14 weeks, his communication clarity score moved from the 7th to the 41st percentile for his class. His ICSE board examination performance on extended writing questions was one of the strongest in his school.

Gap detection is only as valuable as the speed and specificity with which it produces actionable alerts for teachers. A gap that is identified in a data analysis conducted once per term has already allowed the compounding effect to run for months. A gap that is identified in real time and flagged to the teacher before the next classroom session can be addressed before it compounds at all.

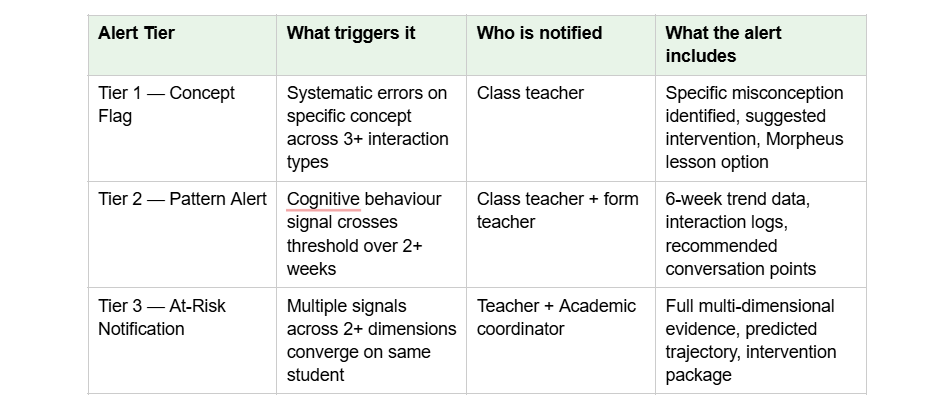

The Morpheus monitoring dashboard implements a three-tier alert system based on the signals Cypher captures.

Tier 1 — Concept-level flags: When a student shows inconsistent performance on a specific concept across multiple interaction types, Morpheus flags the specific concept and the type of inconsistency. The teacher sees: "Arjun is showing systematic errors on fractions when the denominator is a variable — this is a specific gap, not a general fractions difficulty." The flag includes a suggested intervention approach and, optionally, a Morpheus-generated lesson targeting the specific misconception.

Tier 2 — Pattern alerts: When cognitive behaviour signals cross a threshold — persistent time-on-task below a teacher-configurable level, repeated disengagement after a specific topic type, sudden change in engagement patterns — a pattern alert is generated. These are higher priority than concept flags because they indicate a systemic change rather than a specific knowledge gap. Pattern alerts include the six-week trend data, the specific interaction logs that triggered the alert, and recommended teacher conversation points.

Tier 3 — Student-at-risk notifications: When multiple signals across dimensions converge on the same student — a knowledge gap compounded by declining persistence compounded by a communication skill deficit — a student-at-risk notification is generated for the teacher and, where appropriate, for the academic coordinator. These notifications include the full multi-dimensional evidence, the predicted trajectory if no intervention occurs, and a recommended intervention package that spans content, instructional approach, and behaviour support.
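The three tiers above can be sketched as a small classification rule. This is an assumption-laden illustration rather than Morpheus's logic: the `Signals` fields, the `classify` function, and the rule that two or more active dimensions mean Tier 3 are hypothetical. The text does not say how a lone skills signal is tiered, so the sketch leaves it unflagged.

```python
from dataclasses import dataclass
from enum import IntEnum

class Tier(IntEnum):
    NONE = 0
    CONCEPT_FLAG = 1    # Tier 1: concept-level flag
    PATTERN_ALERT = 2   # Tier 2: cognitive behaviour pattern alert
    AT_RISK = 3         # Tier 3: student-at-risk notification

@dataclass
class Signals:
    concept_inconsistency: bool        # inconsistent performance on a concept
    behaviour_threshold_crossed: bool  # e.g. time-on-task below configured level
    skill_deficit: bool                # e.g. communication skill gap

def classify(signals):
    """Map active signals to the highest applicable tier (assumed rule)."""
    active = sum([signals.concept_inconsistency,
                  signals.behaviour_threshold_crossed,
                  signals.skill_deficit])
    if active >= 2:  # signals converge across dimensions
        return Tier.AT_RISK
    if signals.behaviour_threshold_crossed:
        return Tier.PATTERN_ALERT
    if signals.concept_inconsistency:
        return Tier.CONCEPT_FLAG
    return Tier.NONE

print(classify(Signals(True, False, False)).name)
print(classify(Signals(False, True, False)).name)
print(classify(Signals(True, True, True)).name)
```

Using an ordered enum keeps the priority relationship explicit: a pattern alert outranks a concept flag, and converging signals outrank both.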

What Academic Heads, Special Educators, and Assessment Coordinators Do With This Data

For academic heads: the gap detection system changes the nature of intervention decisions from reactive to proactive. Currently, most schools identify students for intervention when their examination scores fall below a threshold. This means the intervention begins after the gap has already compounded to a level that is visible in summative assessment. With real-time gap detection, intervention decisions are based on the earliest possible signal — before the gap has compounded, when remediation is fastest and most effective. The Morpheus dashboard gives academic heads a school-wide view of gap concentrations: which concepts are producing the most gaps across the cohort, which teachers need support in identifying specific misconceptions, and which student groups need priority attention.

For special educators: the multi-dimensional gap profile gives a special education assessment far more specific input than test scores alone. A student referred for special education assessment because of consistently low test scores often has needs that are far more specific than "general learning difficulty." The Cypher interaction data produced over months of structured engagement provides the kind of detailed, concept-level, modality-specific, behaviour-pattern evidence that special educators need to distinguish between learning differences that require specific instructional accommodation and learning gaps that require specific content remediation. In many cases, what appears to be a learning difficulty is a format mismatch, as in the case of Student B above. In other cases, genuine learning differences are present but have been masked by compensatory strategies that prevent accurate identification. The AI interaction data can help remove that masking.

For assessment coordinators: the gap detection system provides the evidential foundation for a formative assessment program that genuinely meets NEP 2020's requirements. Assessment coordinators can use the Morpheus alert data to design targeted formative assessment instruments for the specific concepts and cohorts where gaps are concentrated. They can track gap resolution across the cohort to evaluate whether interventions are working. And they can use the multi-dimensional skills data to build the portfolio-based assessment records that PARAKH and NEP's holistic progress card framework require — evidence of genuine capability development across knowledge, skills, and cognitive behaviour, not just examination score trajectories.

Every one of the four cases described in this blog represents a student whose educational trajectory was being determined by a gap that traditional assessment could not detect. Student A's Grade 6 algebraic misconception was shaping her Grade 8 and would have shaped her Grade 10 performance. Student B's format mismatch was producing a three-year misdiagnosis of scientific capability. Student C's persistence erosion was invisible in her exam scores but present in her interaction data. Student D's communication skill deficit was masked by his analytical strength.

In each case, the AI did not do anything that a sufficiently attentive, sufficiently well-informed, sufficiently present human teacher could not have done. It did something that no teacher managing 40 students, five subjects, administrative responsibilities, and their own professional exhaustion can do: it maintained complete, accurate, real-time attention to every signal from every student in every interaction, continuously, without forgetting what it observed the week before.

That is not a replacement for the teacher. It is an instrument that gives the teacher what they have always needed but never had: the data to know, specifically and confidently, which of their 40 students is at risk, why, and what to do about it before it is too late.

The NEO AI Innovation Lab extends this gap detection into the skills domain that traditional schooling almost never assesses — AI research capability, original project building, and portfolio-based competency demonstration. The Matrix infrastructure ensures that all of this detection, all of this data, and all of this intervention history stays within the school's governance — available to teachers, accessible to parents, auditable by coordinators, and never used for any purpose other than the development of the child it was collected about.

The gap that goes undetected does not disappear. It compounds. AI does not find every gap. But it finds the ones that would otherwise remain invisible until they determine a child's future.

To see Cypher's gap detection and the Morpheus alert dashboard in action with your school's specific student population and curriculum context, contact our implementation team.

AI Ready School provides a complete AI ecosystem for K-12 schools, including Cypher (personalised AI learning companion with multi-dimensional gap detection), Morpheus (AI teaching agent with real-time alert dashboard), Zion (safe AI tool suite), NEO (AI Innovation Labs), and Matrix (sovereign AI infrastructure).

To discuss gap detection implementation at your school: hey@aireadyschool.com or +91 9100013885.