Chiranjeevi Maddala

February 14, 2026

Every time a student opens an AI learning tool in your school, data is being generated. The question is not whether it is being collected. The question is whether your school has decided what happens to it.

India's Digital Personal Data Protection Act (DPDP Act 2023) is now in force. Schools that deploy AI tools bear direct responsibility for the collection, storage, and processing of student data. Yet most institutions have no formal AI data policy in place. This post addresses that gap.

In the Raipur pilot study, schools using the privacy-focused platform from AI Ready School saw student learning outcomes improve by 34%, teacher efficiency increase by 57%, and school-wide academic performance rise by 77%, all within a framework of verifiable data protection.

The Digital Personal Data Protection Act (DPDP Act 2023) is India's foundational data privacy law. For the first time, it establishes enforceable obligations for organisations that process the personal data of children, meaning anyone under 18.

Schools fall squarely within its scope. Any institution using an AI-powered learning platform, assessment tool, or student analytics dashboard is processing personal data under the definition of the Act.

• Verifiable parental or guardian consent before collecting data of minors (under 18)

• Clear, plain-language notice about what data is collected and why

• Data must be used only for the specific purpose for which consent was obtained

• Students and parents have the right to access, correct, and request erasure of their data

• Significant Data Fiduciaries (which can include large edtech platforms) face additional obligations

• Mandatory breach notification to the Data Protection Board of India

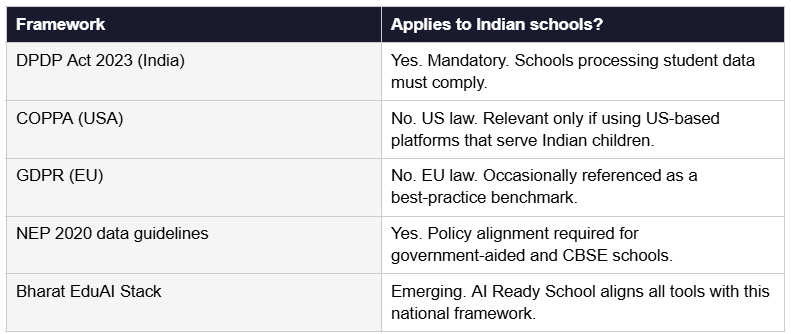

Unlike COPPA (US) or GDPR (EU), the DPDP Act is specifically designed for the Indian context. Schools should not assume that compliance with foreign frameworks is sufficient.

Traditional school software typically stores names, grades, and attendance. AI-powered learning tools go much further. When a student interacts with an AI learning companion like Cypher, the system may capture:

• Learning pace and time-on-task data

• Mistake patterns and concept gaps

• Emotional indicators from engagement data

• Device and location metadata

• Voice or text input in natural language

This richness is what makes AI personalisation powerful. It is also what makes data governance non-negotiable. Without privacy-first architecture, this data can be exploited for profiling, advertising, or identity theft.

When evaluating any AI platform, school administrators and management must verify these five capabilities before deployment:

All student data must be encrypted in transit (TLS 1.2+) and at rest (AES-256 minimum). Zero-knowledge architectures, where even the vendor cannot read student data, represent the gold standard.
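As a rough illustration of the in-transit half of this requirement, the Python sketch below builds a client-side TLS context that refuses anything older than TLS 1.2. It uses only the standard library `ssl` module. The helper name `strict_tls_context` is hypothetical and does not come from any vendor's product. Encryption at rest (AES-256) depends on a vetted cryptography library and proper key management, so it is left out of this sketch.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Return a client TLS context that rejects protocol versions below TLS 1.2."""
    # create_default_context() turns on certificate and hostname verification.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = strict_tls_context()
```

A school IT coordinator could ask a vendor to confirm that their clients and servers enforce an equivalent minimum version.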

Teachers should only see their students. Administrators should see anonymised aggregates by default. No single login should have access to all student data.
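One way to picture this rule is the minimal Python sketch below. The role names, the `User` and `Student` classes, and the `view_for` function are all assumptions made for illustration, not the design of any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    name: str
    role: str                          # "teacher" or "admin" in this sketch
    class_ids: frozenset = frozenset()


@dataclass(frozen=True)
class Student:
    student_id: str
    class_id: str
    score: float


def view_for(user: User, students: list[Student]):
    """Teachers get records for their own classes; admins get an aggregate only."""
    if user.role == "teacher":
        return [s for s in students if s.class_id in user.class_ids]
    if user.role == "admin":
        count = len(students)
        mean = sum(s.score for s in students) / count if count else None
        return {"student_count": count, "mean_score": mean}
    # Any other role is denied rather than given a default view.
    raise PermissionError(f"role {user.role!r} has no access to student data")


roster = [
    Student("s1", "7A", 60.0),
    Student("s2", "7A", 80.0),
    Student("s3", "8B", 70.0),
]
teacher = User("Class 7A teacher", "teacher", frozenset({"7A"}))
admin = User("Principal", "admin")
```

Here the teacher sees only the two students in class 7A, while the administrator sees a headcount and an average with no individual records.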

The platform should collect only what is necessary for the learning purpose. Any feature that captures data beyond this must be explicitly disclosed.
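Data minimisation can be sketched as an allow-list applied before anything is stored. The field names below are hypothetical examples, and a real platform would define its own list against its stated learning purpose.

```python
# Hypothetical allow-list: only fields needed for the learning purpose.
ALLOWED_FIELDS = {"student_id", "lesson_id", "time_on_task_s", "answer_correct"}


def minimise(event: dict) -> tuple[dict, set]:
    """Split an event into the fields to keep and the names of fields dropped."""
    kept = {key: value for key, value in event.items() if key in ALLOWED_FIELDS}
    dropped = set(event) - ALLOWED_FIELDS
    return kept, dropped


raw_event = {
    "student_id": "s1",
    "lesson_id": "fractions-01",
    "time_on_task_s": 312,
    "answer_correct": False,
    "gps_location": "21.25,81.63",   # not needed for the lesson, so it is dropped
    "device_id": "abc123",           # likewise dropped
}
kept, dropped = minimise(raw_event)
```

Logging the names of dropped fields (never their values) gives the school a simple record of what a tool tried to collect beyond its purpose.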

The consent mechanism must be age-appropriate and verifiable, not just a checkbox. Under the DPDP Act 2023, processing the personal data of a child under 18 requires verifiable consent from a parent or lawful guardian.
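The sketch below shows, under simplified assumptions, how a consent record could be checked before any processing takes place. The `ConsentRecord` fields and the purpose labels are hypothetical. How a guardian's identity is actually verified is a separate process that this code does not cover.

```python
from dataclasses import dataclass
from datetime import date

CHILD_AGE_LIMIT = 18  # the article's threshold: under 18 counts as a child


@dataclass(frozen=True)
class ConsentRecord:
    student_id: str
    guardian_verified: bool      # guardian identity was checked, not just a ticked box
    purposes: frozenset          # purposes the guardian agreed to
    withdrawn: bool = False


def age_on(birth: date, today: date) -> int:
    """Whole years completed between birth and today."""
    had_birthday = (today.month, today.day) >= (birth.month, birth.day)
    return today.year - birth.year - (0 if had_birthday else 1)


def needs_guardian_consent(birth: date, today: date) -> bool:
    return age_on(birth, today) < CHILD_AGE_LIMIT


def may_process(consent: ConsentRecord, purpose: str) -> bool:
    """Allow processing only for a purpose covered by verified, unwithdrawn consent."""
    return (
        consent.guardian_verified
        and not consent.withdrawn
        and purpose in consent.purposes
    )


consent = ConsentRecord("s1", True, frozenset({"personalised_practice"}))
```

With this record, `may_process(consent, "personalised_practice")` is allowed, while a request for an unlisted purpose such as `"advertising"` is refused.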

The vendor must have a documented incident response process and commit to notifying the institution within a defined window. Under the DPDP Act, breach notification to the Data Protection Board of India is mandatory.

AI Ready School's platform is built on all five of these capabilities. The Matrix infrastructure product provides the technical backbone, with private cloud deployment and walled garden architecture that keeps student data within the school's control at all times.

Schools are often presented with platforms that claim GDPR or COPPA compliance as a mark of quality. These are not substitutes for DPDP Act compliance. Always ask vendors specifically about their DPDP Act 2023 readiness.

Most Indian schools do not yet have a formal AI data policy. This is a critical gap. The following framework is designed for school principals, IT coordinators, and management committees to adopt immediately.

Step 1: Conduct a data inventory. List every AI or digital tool currently in use at your school. For each tool, document what data it collects, where it is stored, and who has access.

Step 2: Establish a Data Governance Committee. Include the principal, IT coordinator, a parent representative, and a senior teacher. This committee reviews all new technology adoptions and handles data incidents.

Step 3: Require Data Processing Agreements from all vendors. Any platform processing student data must sign a DPA that prohibits advertising use, defines data retention limits, and commits to DPDP Act compliance.

Step 4: Create a parent communication charter. Publish a plain-language summary of what AI tools your school uses, what data they collect, and how parents can request access or deletion.

Step 5: Train educators annually. Teachers using AI tools like Morpheus must understand what data the tool collects and how to handle incidents. A one-hour annual training session is a minimum baseline.
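The data inventory from Step 1 and the agreement check from Step 3 can start as something as simple as the structure sketched below. The record fields, the flag wording, and the example tool are hypothetical. The storage check mirrors a question a committee may want to ask and is not a statement of what the law requires.

```python
from dataclasses import dataclass


@dataclass
class ToolRecord:
    name: str
    data_collected: list[str]
    storage_location: str        # e.g. "India", or "unknown" if the vendor has not said
    who_has_access: list[str]
    dpa_signed: bool


def review_flags(tool: ToolRecord) -> list[str]:
    """Return the points the Data Governance Committee should follow up on."""
    flags = []
    if not tool.dpa_signed:
        flags.append("no signed Data Processing Agreement")
    if tool.storage_location.strip().lower() != "india":
        flags.append("storage location is outside India or unknown")
    if not tool.who_has_access:
        flags.append("access list not documented")
    return flags


inventory = [
    ToolRecord("Example Quiz App", ["name", "quiz scores"], "unknown", [], False),
    ToolRecord("Example Attendance Tool", ["name", "attendance"], "India",
               ["class teacher", "office staff"], True),
]
report = {tool.name: review_flags(tool) for tool in inventory}
```

A spreadsheet with the same columns works just as well. What matters is that every tool has a row and every gap has an owner.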

Privacy protection does not mean limiting the power of AI in education. When implemented correctly, it makes AI more effective by building the trust that allows students to engage authentically.

In schools using Cypher, AI Ready School's personal learning companion, student data is processed within a closed loop. Learning patterns improve personalisation without ever being shared with third parties or advertising networks.

Teachers using Morpheus, the AI teaching agent, get class-level insights through anonymised aggregates. They see which concepts the class is struggling with, without individual students being identified by name in the analytics view.
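To make the idea of anonymised aggregates concrete, here is a minimal sketch that reports an error rate per concept and suppresses any concept attempted by too few students to hide an individual. This is an illustration only, not a description of how Morpheus works internally. The `MIN_GROUP_SIZE` threshold of 5 is an assumed value.

```python
from collections import defaultdict

MIN_GROUP_SIZE = 5  # assumed threshold; smaller groups are suppressed


def concept_error_rates(attempts, min_group_size=MIN_GROUP_SIZE):
    """attempts: iterable of (student_id, concept, is_correct) tuples.

    Returns {concept: error_rate} with no student identifiers in the output.
    """
    students = defaultdict(set)
    totals = defaultdict(int)
    wrong = defaultdict(int)
    for student_id, concept, is_correct in attempts:
        students[concept].add(student_id)
        totals[concept] += 1
        if not is_correct:
            wrong[concept] += 1
    return {
        concept: round(wrong[concept] / totals[concept], 2)
        for concept in totals
        if len(students[concept]) >= min_group_size
    }


attempts = [
    ("s1", "fractions", False), ("s2", "fractions", False), ("s3", "fractions", False),
    ("s4", "fractions", True), ("s5", "fractions", True),
    ("s1", "decimals", False), ("s2", "decimals", True),
]
rates = concept_error_rates(attempts)
```

In this sample, "fractions" is reported because five students attempted it, while "decimals" is withheld because only two did.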

The Zion AI tool suite, used by students for projects and assignments, operates within the school's data perimeter. Student-generated content stays within the institution.

For AI innovation labs and project environments, NEO is deployed with device-level access controls that ensure student experimentation does not create data exposure.

All of this runs on Matrix, the AI infrastructure layer that powers the entire ecosystem within a school's private cloud, keeping student data under institutional control in support of the school's DPDP Act obligations.

One of the most persistent misconceptions in education technology is that stronger privacy means weaker performance. The results of the Raipur pilot study contradict this.

Schools in Raipur that implemented AI Ready School's complete privacy-first ecosystem measured the following:

• 34% improvement in student learning outcomes

• 57% increase in teacher operational efficiency

• 77% improvement in overall school academic performance

These results were achieved within a framework of full DPDP Act alignment, parental consent workflows, and private cloud deployment. Safety and performance reinforce each other when the system is designed correctly.

Use this checklist in your next vendor evaluation:

• Are you DPDP Act 2023 compliant? Can you provide documentation?

• Where is student data physically stored? Is it in India?

• Do you use student data to train your AI models?

• Is student data ever shared with advertisers or third parties?

• What is your breach notification timeline?

• Can parents request complete deletion of their child's data?

• Do you have SOC 2 Type II or equivalent certification?

• Will you sign a Data Processing Agreement specific to DPDP Act obligations?

A vendor that cannot answer these questions confidently is not ready for Indian school deployment.
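The checklist above can be tracked in a small script so that unanswered questions are not lost between meetings. The key names are hypothetical shorthand for the eight questions. Anything other than a clear yes is treated as still open.

```python
# Hypothetical shorthand keys, one per checklist question above.
CHECKLIST = [
    "dpdp_documentation_provided",
    "data_stored_in_india",
    "no_model_training_on_student_data",
    "no_sharing_with_advertisers_or_third_parties",
    "breach_notification_timeline_defined",
    "parent_deletion_requests_supported",
    "soc2_type2_or_equivalent",
    "dpdp_specific_dpa_signed",
]


def open_items(answers: dict) -> list[str]:
    """Return checklist keys the vendor has not answered with a clear yes."""
    return [key for key in CHECKLIST if answers.get(key) is not True]


vendor_answers = {
    "dpdp_documentation_provided": True,
    "data_stored_in_india": True,
    "no_model_training_on_student_data": False,
    "breach_notification_timeline_defined": True,
}
remaining = open_items(vendor_answers)
```

A vendor is ready for the next stage of evaluation only when `open_items` returns an empty list.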

The future of education in India is AI-driven. It must also be safety-first. These two goals are not in tension. They are the same goal.

If your school is ready to implement a complete, DPDP-compliant AI ecosystem, schedule a call with AI Ready School and see how the Raipur results can be replicated in your institution.
