Chiranjeevi Maddala

April 28, 2026

Most schools believe they are more AI-ready than they are. Most consultants advising schools have no consistent framework to measure it. This scorecard changes both. Score your school across five dimensions, interpret your total, and know exactly what to do next.

India's AI curriculum mandate from Class 3 begins in 2026-27. The Ministry of Education has specified that AI and Computational Thinking are not electives but core curriculum requirements. CBSE's expert committee is finalising the framework. NCERT is reviewing the pedagogy. The mandate is real, the timeline is set, and the question every school leader faces right now is the same: are we actually ready?

The honest answer, for most schools, is: partially. Very few schools are comprehensively AI-ready. A significant number have strong infrastructure but no curriculum. A larger number have curriculum aspirations but no infrastructure. Almost every school underestimates the teacher readiness gap — the difference between teachers who have been introduced to AI tools and teachers who can deliver AI education with confidence and competence.

This scorecard was developed from our experience implementing AI across 30+ schools in India and internationally. It reflects what we have learned about the difference between schools that successfully integrate AI into their educational programs and schools that announce AI adoption and then struggle to make it work. The five dimensions — Infrastructure, Teacher Readiness, Student Access, Curriculum Integration, and Data and Analytics — are the dimensions that consistently differentiate successful implementations from unsuccessful ones.

Work through the scorecard honestly. The useful result is not a high score. It is an accurate picture of where your school actually stands, so that the decisions you make about AI investment and implementation are grounded in reality rather than aspiration.

How to use this scorecard: Read each question in each dimension. Award your school the points shown if the condition is fully and genuinely met. Award half points if the condition is partially met. Award zero if it is not yet in place. Be specific. Be honest. The score you produce is only as useful as the accuracy of the self-assessment that generates it.

Total possible score: 100 points across 5 dimensions of 20 points each.
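The arithmetic behind the scorecard can be sketched in a few lines of Python. This is a minimal illustration, not an official tool: the answer labels ("full", "partial", "none"), the function names, and the sample answers are all assumptions made for the example. It assumes every question is worth 4 points, with half credit worth 2.

```python
# Points per answer level, following the scoring rule described above.
# The labels "full", "partial", and "none" are hypothetical names.
POINTS = {"full": 4, "partial": 2, "none": 0}
QUESTIONS_PER_DIMENSION = 5


def dimension_score(answers):
    """Return one dimension's score out of 20 from its five answers."""
    if len(answers) != QUESTIONS_PER_DIMENSION:
        raise ValueError("each dimension has exactly five questions")
    return sum(POINTS[answer] for answer in answers)


def total_score(answers_by_dimension):
    """Sum the dimension scores into a total out of 100."""
    return sum(dimension_score(a) for a in answers_by_dimension.values())


# Made-up answers for an imaginary school, for illustration only.
example = {
    "Infrastructure": ["full", "partial", "none", "none", "partial"],
    "Teacher Readiness": ["partial", "partial", "none", "partial", "full"],
    "Student Access": ["none", "partial", "partial", "none", "partial"],
    "Curriculum Integration": ["partial", "none", "full", "none", "partial"],
    "Data and Analytics": ["none", "none", "partial", "partial", "none"],
}

print(total_score(example))  # 36
```

Recording answers per question rather than a single number per dimension keeps the reasoning behind each score visible, which helps when the leadership team later compares results.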

A school that scores itself honestly at 45 and knows exactly why is in a better position than a school that claims 75 and cannot explain what it has built.

DIMENSION 1 · MAX 20 POINTS

Infrastructure — Does the School Have the Physical Capacity to Run AI?

Infrastructure is the foundation. Without the right physical and technical foundation, every other dimension is constrained. Infrastructure does not mean having computers. It means having the specific capacity — connectivity, devices, servers, space — to run AI applications reliably for every student.

[ 4 pts ] Does the school have reliable internet connectivity that supports simultaneous AI application use by at least 50% of students? (4 points if yes, 2 points if connectivity is available but frequently unreliable)

[ 4 pts ] Does the school have sufficient devices — computers, tablets, or AI-enabled endpoints — for every student to access AI learning tools without sharing? (4 points if 1:1 or better, 2 points if 2:1, 0 if worse than 2:1)

[ 4 pts ] Does the school have a dedicated physical space — lab, studio, or Innovation Centre — designed and equipped specifically for AI education rather than repurposed from a general computer room? (4 points if yes)

[ 4 pts ] Does the school have local AI server infrastructure — on-campus compute that can run AI models without depending on external cloud connectivity? (4 points if yes, 2 points if partially implemented)

[ 4 pts ] Does the school have a documented IT governance framework that specifies how AI tools are approved, deployed, monitored, and updated? (4 points if documented and active, 2 points if informal)

Infrastructure score: ___ / 20

What your infrastructure score means:

17–20 Excellent

Your physical foundation is strong. Infrastructure is not a constraint on AI adoption. Focus on teacher readiness and curriculum integration.

13–16 Good

Solid infrastructure with identifiable gaps. Likely one or two areas — local servers, dedicated lab space — that need investment before full implementation.

8–12 Developing

Infrastructure is the bottleneck. AI adoption is possible but limited in scope and consistency until the physical foundation is strengthened.

0–7 Early Stage

Significant infrastructure investment required before meaningful AI implementation is possible. Start with device access and connectivity before any curriculum decisions.
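The same four bands are used for every dimension in this scorecard, so the lookup can be written once. The sketch below is illustrative only; the function name `dimension_band` is an assumption, and the thresholds are copied from the bands listed above.

```python
def dimension_band(score):
    """Map a dimension score (0 to 20) to the band named in the scorecard."""
    if not 0 <= score <= 20:
        raise ValueError("a dimension score must be between 0 and 20")
    if score >= 17:
        return "Excellent"
    if score >= 13:
        return "Good"
    if score >= 8:
        return "Developing"
    return "Early Stage"


print(dimension_band(14))  # Good
```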

Infrastructure note for Tier 2 and 3 cities: schools in lower-connectivity environments should specifically evaluate the local server question (Question 4) as a priority. Matrix, our sovereign AI infrastructure product, eliminates cloud dependency — AI runs at full quality regardless of internet connectivity. A school that installs Matrix can score 4 points on Question 4 regardless of its external connectivity quality.

DIMENSION 2 · MAX 20 POINTS

Teacher Readiness — Are Educators Equipped to Teach With and About AI?

Teacher readiness is consistently the most underestimated dimension in AI implementation planning. Schools invest in infrastructure and curriculum and then discover that implementation quality depends entirely on teacher confidence and competence — neither of which is produced by a two-day training session.

The teacher readiness questions below distinguish between three levels that matter enormously in practice: exposure (teachers have seen AI tools), comfort (teachers can use AI tools independently), and pedagogical confidence (teachers can integrate AI into their teaching in ways that improve learning outcomes). Most schools that self-report high teacher readiness have achieved exposure. Genuine readiness requires pedagogical confidence.

[ 4 pts ] Have more than 75% of teaching staff completed structured AI training that goes beyond tool familiarisation to include pedagogical integration — how to use AI to improve learning outcomes, not just how to use AI tools? (4 points if yes, 2 points if 40–75% trained, 0 if less than 40%)

[ 4 pts ] Can teachers independently create, assign, and evaluate AI-assisted learning activities without requiring technical support for routine tasks? (4 points if most teachers are at this level, 2 points if some are, 0 if few or none are)

[ 4 pts ] Does the school have a dedicated AI education coordinator or equivalent role — a staff member with specific responsibility for AI curriculum coordination, teacher support, and implementation quality? (4 points if yes)

[ 4 pts ] Does the school have an ongoing professional development program for AI education that updates teacher knowledge and skills as the field evolves — not a one-time training event? (4 points if structured and ongoing, 2 points if occasional)

[ 4 pts ] Are teachers using AI tools to improve their own professional practice — lesson planning, assessment design, student progress monitoring — not just as a curriculum delivery tool for students? (4 points if widespread, 2 points if some teachers, 0 if rare or absent)

Teacher readiness score: ___ / 20

What your teacher readiness score means:

17–20 Excellent

Teachers are genuine AI educators, not AI tool users. Implementation quality will be high because it rests on confident, pedagogically aware professionals.

13–16 Good

Strong core of capable AI educators with gaps in coverage or ongoing development. Focus on extending capability to all staff and building the ongoing PD infrastructure.

8–12 Developing

Teachers have had exposure but not genuine capability development. Implementation will be inconsistent across classrooms. Structured, ongoing professional development is the immediate priority.

0–7 Early Stage

Teacher readiness is the primary constraint on AI implementation quality. Infrastructure investment without parallel teacher development will produce poor and inconsistent outcomes.

Teacher readiness note: Morpheus, our AI teaching agent, is specifically designed to support teachers who are at the developing stage — it does not require teachers to be AI experts to use it effectively. A teacher who can specify their subject, board, and chapter gets a complete, board-aligned lesson package. The platform meets teachers where they are and builds their confidence through use rather than requiring confidence before use.

DIMENSION 3 · MAX 20 POINTS

Student Access — Do Students Have Genuine, Governed AI Learning Experiences?

Student access is not simply about whether students can log into an AI tool. Genuine student access means that every student in the school has regular, structured, supervised AI learning experiences that are age-appropriate, curriculum-aligned, and designed to develop genuine AI literacy rather than AI dependency.

The distinction between access and genuine access matters because most schools that report student AI access have given students the ability to use general-purpose AI tools for homework completion. This is not AI education. It is AI availability. Genuine access requires intentional design: the right tools, the right governance, the right curriculum integration, and the right teacher oversight.

[ 4 pts ] Do all students across all grade levels have access to AI learning tools that are specifically designed for K-12 use — not general-purpose consumer AI tools that happen to be used by students? (4 points if yes for all grades, 2 points if some grades, 0 if general-purpose tools only)

[ 4 pts ] Are student AI interactions supervised and visible to teachers in real time — not a black box that the school cannot monitor or govern? (4 points if full teacher visibility exists, 2 points if partial visibility, 0 if no monitoring)

[ 4 pts ] Does the school have a clear, documented policy on how AI can and cannot be used by students — covering academic integrity, data handling, appropriate content, and responsible use? (4 points if documented and actively enforced, 2 points if informal)

[ 4 pts ] Are student AI interactions personalised to the individual student's curriculum level, learning gaps, and prior knowledge — or do all students receive the same generic AI experience regardless of where they are? (4 points if genuine personalisation, 2 points if some differentiation, 0 if uniform)

[ 4 pts ] Do students develop AI literacy through their school AI experience — understanding how AI works, where it fails, and how to evaluate AI outputs critically — rather than simply becoming more proficient at using AI tools? (4 points if AI literacy is an explicit outcome, 2 points if partially, 0 if not addressed)

Student access score: ___ / 20

What your student access score means:

17–20 Excellent

Students have genuine, governed, personalised AI learning experiences. Access is equitable across grades and designed to build capability, not dependency.

13–16 Good

Solid student access with identifiable gaps — typically in personalisation or AI literacy development. Strong foundation to build on.

8–12 Developing

Students have access to AI tools but the experience is not yet designed well enough to produce genuine AI literacy. Tool availability without pedagogical design.

0–7 Early Stage

Student access is either absent or limited to unmonitored use of general-purpose tools. A structured, governed student AI program needs to be built from the ground up.

Student access note: Cypher and Zion are specifically designed to produce the outcomes this dimension measures. Cypher's questioning-first design builds AI literacy by teaching students to engage critically rather than passively. Zion's five hubs provide structured, age-appropriate AI tool access with full teacher governance. Both are aligned to CBSE, ICSE, and state board curricula — not generic AI access.

DIMENSION 4 · MAX 20 POINTS

Curriculum Integration — Is AI Built Into Learning, or Bolted Onto It?

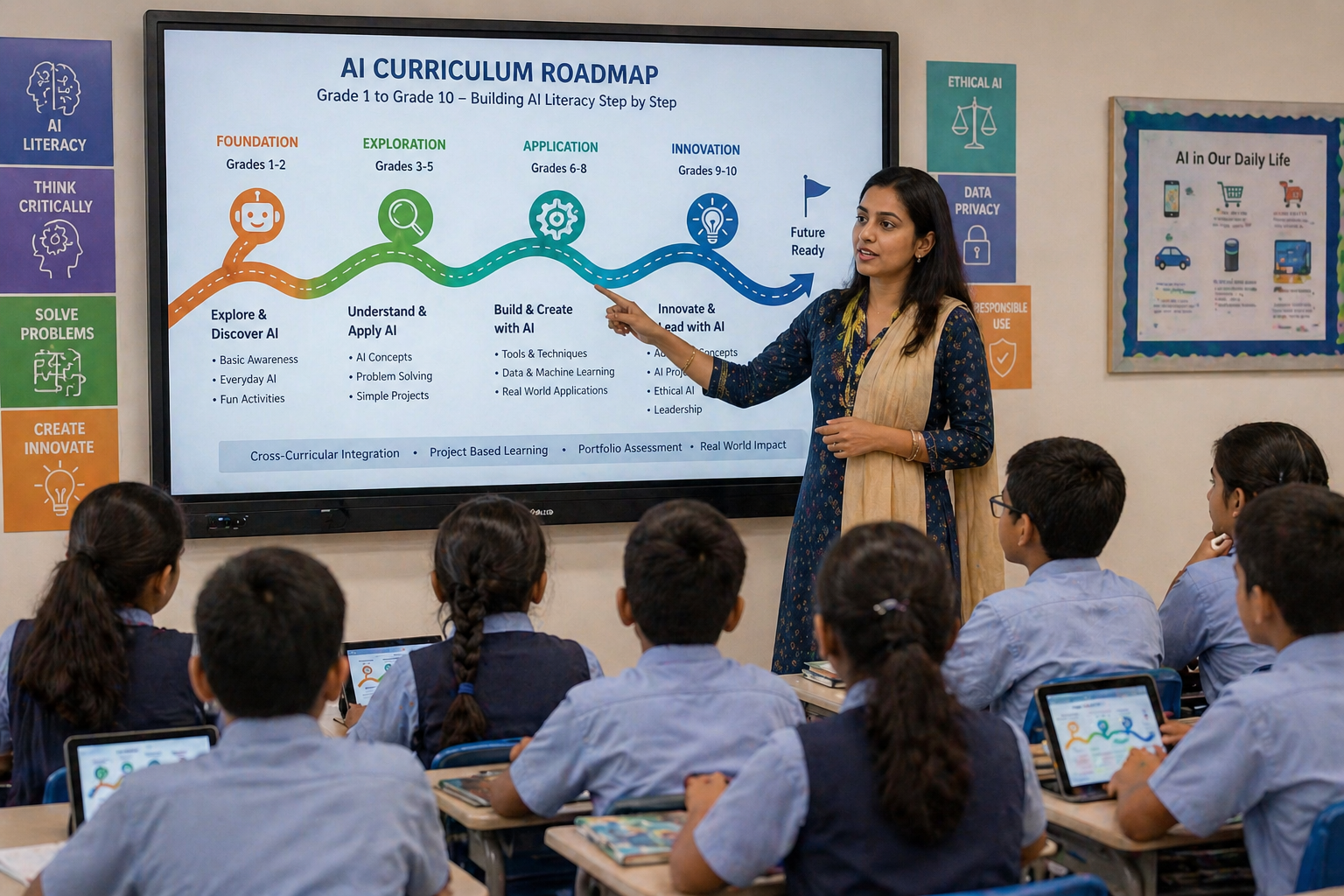

Curriculum integration is the dimension that most clearly separates schools that are using AI from schools that are building AI education. A school that has deployed an AI tool without integrating it into its curriculum structure has changed its technology environment. A school that has embedded AI learning objectives into its curriculum framework, mapped AI tools to specific learning outcomes, and created progression pathways from Grade 1 through Grade 10 has changed its educational program.

The difference is significant and visible. Students in a technology-changed school use AI when given access to it. Students in a curriculum-changed school develop AI literacy systematically across their school years and graduate with documented, portfolio-backed AI capability. The mandate India's government has issued requires the second outcome.

[ 4 pts ] Does the school have a documented AI curriculum that specifies learning objectives, progression pathways, and assessment criteria for every grade level from primary school through Class 10? (4 points if yes, 2 points if partially documented, 0 if no formal AI curriculum exists)

[ 4 pts ] Are AI learning objectives mapped to specific NEP 2020 mandates and to the Ministry of Education's AI and Computational Thinking curriculum framework for 2026-27? (4 points if explicitly mapped, 2 points if informally aligned, 0 if no mapping)

[ 4 pts ] Is AI used as a tool for learning in core subjects (Mathematics, Science, Language, Social Studies), not only in a standalone AI or computer class? (4 points if cross-curricular integration is systematic, 2 points if in some subjects, 0 if AI is siloed in one class)

[ 4 pts ] Does the school have a structured program for AI innovation, research, or competition that gives students the opportunity to demonstrate AI capability through original work — not just tool use? (4 points if a formal program exists, 2 points if informal, 0 if absent)

[ 4 pts ] Are student AI learning outcomes assessed through methods that evaluate genuine capability — portfolios, projects, research, demonstration — rather than only through traditional examination formats? (4 points if portfolio/project assessment is systematic, 2 points if partially, 0 if examination-only)

Curriculum integration score: ___ / 20

What your curriculum integration score means:

17–20 Excellent

AI is genuinely integrated into the school's educational program with documented objectives, cross-curricular presence, and authentic assessment. This is what NEP 2020 compliance looks like.

13–16 Good

Strong curriculum integration with gaps in either cross-curricular coverage or authentic assessment. The framework exists; full implementation across all subjects and grades needs attention.

8–12 Developing

AI curriculum exists as a concept but has not been operationalised into the school's full educational program. Likely a standalone AI class without cross-curricular integration.

0–7 Early Stage

No systematic AI curriculum exists. AI tool use is present but unstructured. Building a genuine AI curriculum is the foundational priority before any other integration work.

Curriculum integration note: The NEO AI Innovation Lab curriculum is designed to close the gap between Developing and Excellent on this dimension. Its four-level, Grades 1-10 framework with specific learning objectives, cross-domain projects, competition pathways, and portfolio-based assessment is the most complete AI curriculum available for Indian K-12 schools. Schools that install NEO with its full curriculum framework can move from Early Stage to Good on this dimension in a single academic year.

DIMENSION 5 · MAX 20 POINTS

Data and Analytics — Can the School See What Is Actually Happening?

Data and analytics is the dimension most schools undervalue until they try to evaluate whether their AI implementation is working. Without data, AI adoption is an act of faith. With data, it is a management decision that can be evaluated, adjusted, and improved based on evidence.

The questions in this dimension assess whether the school has the infrastructure to know — not guess, not assume, not hope — what is happening in student learning as a result of AI implementation. This means real-time data, concept-level granularity, teacher-accessible dashboards, and the institutional capacity to make decisions based on what the data shows.

[ 4 pts ] Do teachers have access to real-time dashboards that show individual student learning progress at the concept level — not just assignment completion rates or end-of-term scores? (4 points if real-time and concept-level, 2 points if periodic or aggregate, 0 if absent)

[ 4 pts ] Can school management access school-wide learning analytics that show curriculum effectiveness, teacher support needs, and student outcome trends — updated frequently enough to inform decisions before problems compound? (4 points if live management dashboard exists, 2 points if periodic reports, 0 if absent)

[ 4 pts ] Does the school track student progress across multiple dimensions — knowledge, skills, cognitive behaviour, and learning style — rather than only through examination performance? (4 points if multi-dimensional tracking, 2 points if some dimensions beyond exams, 0 if exam-only)

[ 4 pts ] Does the school have clear data governance — knowing what student data the AI platform collects, where it is stored, who has access, and how it can be deleted — with documented compliance with India's DPDP Act 2023? (4 points if fully documented and compliant, 2 points if partial, 0 if unaddressed)

[ 4 pts ] Has the school measured the learning outcome impact of its AI implementation — with before-and-after data that shows specifically how student performance changed as a result of AI adoption? (4 points if measured and documented, 2 points if partially measured, 0 if no measurement)

Data and analytics score: ___ / 20

What your data and analytics score means:

17–20 Excellent

The school can see its learning outcomes in real time and make evidence-based decisions. AI implementation is governed by data, not assumption. This is the standard every school should reach.

13–16 Good

Strong data capability with gaps — typically in multi-dimensional tracking or DPDP compliance documentation. The foundation is solid; close the specific gaps identified.

8–12 Developing

Some data exists but it is not rich enough, timely enough, or accessible enough to genuinely inform AI implementation decisions. The school is making decisions with limited visibility.

0–7 Early Stage

The school has little visibility into what its AI implementation is producing. Without data, it is impossible to evaluate whether the investment is working or to justify it to parents, trustees, or regulators.

Data and analytics note: The Morpheus monitoring dashboard and Cypher's 360-degree student profile directly address the first three questions in this dimension. The Matrix infrastructure addresses Question 4 — student data stays on school infrastructure, giving the school complete governance and DPDP Act compliance capability. The Raipur implementation provides the documented before-and-after learning outcome evidence that Question 5 requires.

Your total score tells you where you stand. Your dimension profile tells you what to do. The two dimensions with the lowest scores are the highest priority, regardless of your total.
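Picking the two lowest-scoring dimensions is a simple sort. The sketch below is a hypothetical illustration; the function name and the sample scores are assumptions, not data from any school.

```python
def priority_dimensions(scores, count=2):
    """Return the `count` lowest-scoring dimensions, lowest first.

    sorted() is stable, so tied dimensions keep the order in which
    they were entered.
    """
    return sorted(scores, key=scores.get)[:count]


# Made-up dimension scores for illustration (total: 50).
scores = {
    "Infrastructure": 14,
    "Teacher Readiness": 8,
    "Student Access": 12,
    "Curriculum Integration": 10,
    "Data and Analytics": 6,
}

print(priority_dimensions(scores))
# ['Data and Analytics', 'Teacher Readiness']
```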

If Infrastructure is your lowest score: No other dimension can be fully realised without the physical foundation. Device access and connectivity are the first investments. For schools in lower-connectivity environments, Matrix local server infrastructure is the most important single investment — it eliminates the cloud dependency that makes every other dimension fragile.

If Teacher Readiness is your lowest score: This is the most common gap and the most consequential for implementation quality. Infrastructure without teacher capability produces expensive equipment that underperforms. Structured ongoing professional development, not one-time training, is the intervention. Morpheus is specifically designed to support teachers at the developing stage — it meets teachers where they are.

If Student Access is your lowest score: The governance and pedagogical design of student AI experiences needs urgent attention. General-purpose AI tools used by students without monitoring, curriculum alignment, or safety design are not an asset. They are a liability. Replacing them with purpose-built K-12 tools that include teacher visibility and curriculum alignment is the priority.

If Curriculum Integration is your lowest score: AI adoption without curriculum integration produces tool use, not AI education. Building a formal AI curriculum — with grade-by-grade objectives, cross-curricular integration, and portfolio assessment — is the structural change that converts AI tool access into genuine AI literacy. NEO's four-level curriculum framework is the fastest path to closing this gap.

If Data and Analytics is your lowest score: The school is making AI investment decisions without the visibility to evaluate whether those decisions are working. Real-time teacher dashboards and management-level analytics are not luxuries. They are the governance infrastructure that allows AI implementation to be managed responsibly rather than hoped for optimistically.

For AI Leaders (85–100): Document your implementation model in a format that can be shared. Consider applying to be featured as an AI Ready School showcase partner school. Engage with CBSE and state board networks to share what genuine AI implementation looks like. The schools in your range are the reference points the sector needs, and most of them are not yet visible.

For AI Ready schools (70–84): Identify your weakest dimension and build a specific 6-month improvement plan for it. Share your current implementation with parents and trustees — a school at this level has a genuinely impressive story that most parents do not fully know. Request a Morpheus dashboard demo to see whether your data and analytics infrastructure matches what is available.

For AI Developing schools (55–69): Commission a specific gap analysis for your two lowest-scoring dimensions and build an 18-month implementation roadmap. The 2026-27 mandate is achievable from this starting point with deliberate planning. A site visit to an AI Leader school in your region is one of the highest-value investments you can make at this stage.

For AI Beginning schools (40–54): Focus on the three foundational dimensions: Infrastructure, Teacher Readiness, and Student Access. Curriculum Integration and Data and Analytics cannot be built effectively without these three. A structured implementation partner — not a tool vendor, but an implementation partner who stays through the process — is essential at this stage.

For AI Starting schools (0–39): Begin with an honest governance decision: who in the school leadership is responsible for AI implementation, what budget is allocated to it, and what the 12-month milestones look like. AI implementation without internal ownership and accountability produces the same result as any other initiative without ownership: intention without outcome. Name the owner. Set the milestones. Begin.
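The five total-score tiers described above can be expressed as a lookup table. This is a minimal sketch; the names `TOTAL_BANDS` and `total_band` are assumptions, and the thresholds are taken directly from the ranges in the text.

```python
# (minimum total, tier name), highest tier first.
TOTAL_BANDS = [
    (85, "AI Leader"),
    (70, "AI Ready"),
    (55, "AI Developing"),
    (40, "AI Beginning"),
    (0, "AI Starting"),
]


def total_band(total):
    """Map a total score (0 to 100) to its tier."""
    if not 0 <= total <= 100:
        raise ValueError("the total score must be between 0 and 100")
    for minimum, name in TOTAL_BANDS:
        if total >= minimum:
            return name


print(total_band(52))  # AI Beginning
```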

The score that matters is not the one you report to your board. It is the one you calculate honestly in a room with the people who will have to implement what you decide.

The most valuable use of this scorecard is not as a solo exercise. It is as a structured conversation tool for school leadership teams. Have your principal, IT coordinator, academic director, and one or two senior teachers each complete the scorecard independently, then compare results.

Disagreements between scorers are diagnostic. If your principal scores Infrastructure at 16 and your IT coordinator scores it at 8, the gap reveals something important: either the principal does not know what the IT coordinator knows about the actual state of the infrastructure, or the IT coordinator is applying a stricter standard than the principal thinks is required. Either way, the conversation that the disagreement forces is more valuable than any single score.
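One way to surface these disagreements is to compute, for each dimension, the spread between the highest and lowest score given by any scorer. The sketch below is an assumption-laden illustration: the function name, the threshold of 6 points, and the Teacher Readiness figures are invented for the example; only the Infrastructure scores of 16 and 8 come from the text.

```python
def flag_disagreements(scores_by_rater, threshold=6):
    """Return {dimension: spread} where scorers differ by `threshold` or more.

    The threshold is a hypothetical choice; pick whatever gap your team
    considers worth a conversation.
    """
    dimensions = next(iter(scores_by_rater.values())).keys()
    flagged = {}
    for dimension in dimensions:
        values = [scores[dimension] for scores in scores_by_rater.values()]
        spread = max(values) - min(values)
        if spread >= threshold:
            flagged[dimension] = spread
    return flagged


ratings = {
    "principal": {"Infrastructure": 16, "Teacher Readiness": 12},
    "it_coordinator": {"Infrastructure": 8, "Teacher Readiness": 10},
}

print(flag_disagreements(ratings))  # {'Infrastructure': 8}
```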

Use the scorecard annually. An AI readiness score from April 2026 compared to an AI readiness score from April 2027 tells you specifically whether your implementation investments are producing the gains you intended. It makes accountability concrete: not "we feel we are making progress" but "we moved from 52 to 68 and here is which dimension drove most of the gain."
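The year-on-year comparison can be sketched as a per-dimension difference. The dimension scores below are made up so that the totals match the 52 and 68 in the example above; the function names are assumptions.

```python
def gains(before, after):
    """Return the change in each dimension score between two assessments."""
    return {dimension: after[dimension] - before[dimension] for dimension in before}


def biggest_gain(before, after):
    """Return the dimension that improved the most."""
    changes = gains(before, after)
    return max(changes, key=changes.get)


# Hypothetical scores for illustration only.
april_2026 = {
    "Infrastructure": 12,
    "Teacher Readiness": 8,
    "Student Access": 10,
    "Curriculum Integration": 12,
    "Data and Analytics": 10,
}
april_2027 = {
    "Infrastructure": 14,
    "Teacher Readiness": 16,
    "Student Access": 12,
    "Curriculum Integration": 14,
    "Data and Analytics": 12,
}

print(sum(april_2026.values()), sum(april_2027.values()))  # 52 68
print(biggest_gain(april_2026, april_2027))  # Teacher Readiness
```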

The full interactive version of this assessment, which includes scoring guidance, benchmark comparisons against schools at similar resource levels, and personalised next-step recommendations based on your dimension profile, is available through AI Ready School. It is the version of this tool designed for the school leader who wants not just a score but a roadmap.

India's 2026-27 AI curriculum mandate is the most significant education policy development of this decade. The schools that prepare for it seriously, by understanding honestly where they stand and building deliberately toward where they need to be, are the schools whose students will benefit most from what AI-enabled education can produce. The schools that treat it as a compliance exercise will produce compliance, not education.

The scorecard is the beginning of a serious preparation. Take it with your team. Use what it shows you. The honest number is the useful number.

To take the full interactive AI Readiness Assessment and receive your personalised school report, visit aireadyschool.com or contact our implementation team.

AI Ready School provides a complete AI ecosystem for K-12 schools, including Cypher (personalised AI learning companion), Morpheus (AI teaching agent), Zion (safe AI tool suite), NEO (AI Innovation Labs), and Matrix (sovereign AI infrastructure). Helping schools move from AI Starting to AI Leader.

To discuss your scorecard results with our team: hey@aireadyschool.com or +91 9100013885.