Chiranjeevi Maddala

May 13, 2026

Every school leader who has sat across from an AI vendor has heard the same pitch: transformational outcomes, future-ready students, competitive differentiation. What they rarely hear is a clear, honest answer to the question that actually determines whether AI adoption happens: what does this cost, what does it save, and what does the return look like at our school's specific size and budget? This blog gives you that answer.

The conversation about AI in schools has been dominated by philosophy and pedagogy. Both matter. But the decision that school finance managers, trustees, and chain operators actually face is an economic one. Resources are finite. Every rupee spent on AI is a rupee not spent on something else. The burden of proof for AI investment is the same as the burden of proof for any significant capital or operational expenditure: demonstrate that the return, measured in ways that the institution values, justifies the cost.

We have now implemented AI across 30+ schools in India and internationally, across government schools with constrained budgets and premium private schools with ambitious investment capacity. We have enough data to give school leaders the honest economic picture that most AI vendors avoid providing. Not because the picture is unflattering — it is not — but because honest economic analysis requires specificity that generic benefit claims do not provide.

This blog presents a transparent cost-benefit framework for AI in schools. We apply it at three school sizes. We include calculations that school finance managers can verify against their own cost data. And we are explicit about where the numbers are uncertain and where they are well-established.

The question is not whether AI is worth it. The question is whether you know specifically what it is worth at your school.

Before calculating return, you need an accurate picture of the cost. AI costs in schools fall into four categories, and most schools that have had bad experiences with AI adoption have underestimated one or more of them.

Platform subscription is the most visible cost and the one most commonly discussed. For AI Ready School, the subscription covers access to the complete ecosystem: Cypher for students, Morpheus for teachers, Zion for the full tool suite, and NEO for innovation lab access. Platform costs scale with school size and are structured per-student or per-institution depending on implementation scope.

For a realistic comparison, consider what schools are currently paying for the fragmented AI tools they are already using. Across our partner schools, we find that the average school actively using AI tools is running 8 to 12 separate subscriptions — AI writing tools, image generators, quiz platforms, coding environments, research tools — most of which the IT team did not procure and none of which connect to each other. When these subscriptions are aggregated, the total monthly spend frequently exceeds what a single integrated platform costs. The consolidation benefit alone often justifies the platform cost before any outcome improvement is calculated.

For schools in well-connected urban environments, infrastructure costs are minimal. The AI Ready School platform runs on standard school devices and network infrastructure that most schools already have. No specialised hardware is required for cloud deployment.

For schools in lower-connectivity environments or schools with data sovereignty requirements, Matrix — our on-premises AI infrastructure product — involves upfront hardware investment. This investment is significant but one-time, and it eliminates cloud subscription costs for AI processing while providing DPDP Act compliance that cloud deployment cannot structurally guarantee. For government schools and schools in Tier 2 and 3 cities, the Matrix investment typically amortises over three to four years against connectivity costs avoided and compliance risk eliminated.
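As a rough illustration of the amortisation claim, a simple payback calculation divides the one-time hardware cost by the annual costs it avoids. The figures below are hypothetical placeholders rather than Matrix pricing, and the function name `payback_years` is an assumption made for this sketch.

```python
def payback_years(upfront_cost: float, annual_costs_avoided: float) -> float:
    """Years needed for avoided annual costs to cover a one-time investment."""
    if annual_costs_avoided <= 0:
        raise ValueError("annual_costs_avoided must be positive")
    return upfront_cost / annual_costs_avoided


# Hypothetical inputs (Indian digit grouping written with underscores).
hardware_cost = 30_00_000            # one-time on-premises hardware spend
connectivity_avoided = 6_00_000      # yearly bandwidth / cloud cost no longer paid
compliance_risk_avoided = 2_50_000   # yearly expected compliance cost removed

years = payback_years(hardware_cost, connectivity_avoided + compliance_risk_avoided)
print(f"Payback period: {years:.1f} years")
```

With these placeholder inputs the payback falls inside the three-to-four-year window described above; a school would substitute its own hardware quote and avoided costs.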

Implementation and training are the costs that most AI vendors understate and most schools underestimate. A platform that teachers cannot use confidently is not a platform — it is expensive shelf furniture. Genuine AI implementation requires a structured onboarding process, an identified internal champion, a professional development program that extends beyond the initial training event, and ongoing support for the teacher questions that arise only when implementation is actually happening.

AI Ready School's implementation model includes structured onboarding, train-the-trainer support for the internal champion cohort, and ongoing professional development resources. These are included in the implementation agreement rather than priced separately, because we have learned from partner school experience that implementations that cut corners on teacher development fail. The cost of a failed implementation — sunk subscription fees, teacher frustration, student disruption, and the reputational cost of announcing an AI initiative that did not work — is substantially higher than the cost of doing implementation properly.

The opportunity cost of AI adoption is the management attention, IT resources, and institutional energy that implementation requires. For a school that has never implemented technology at this scale, implementation is a significant project with real demands on leadership time. This cost is real and should be planned for. Schools that treat AI implementation as a minor IT project rather than a significant institutional initiative consistently underperform on outcomes relative to schools that treat it as the strategic priority it is.

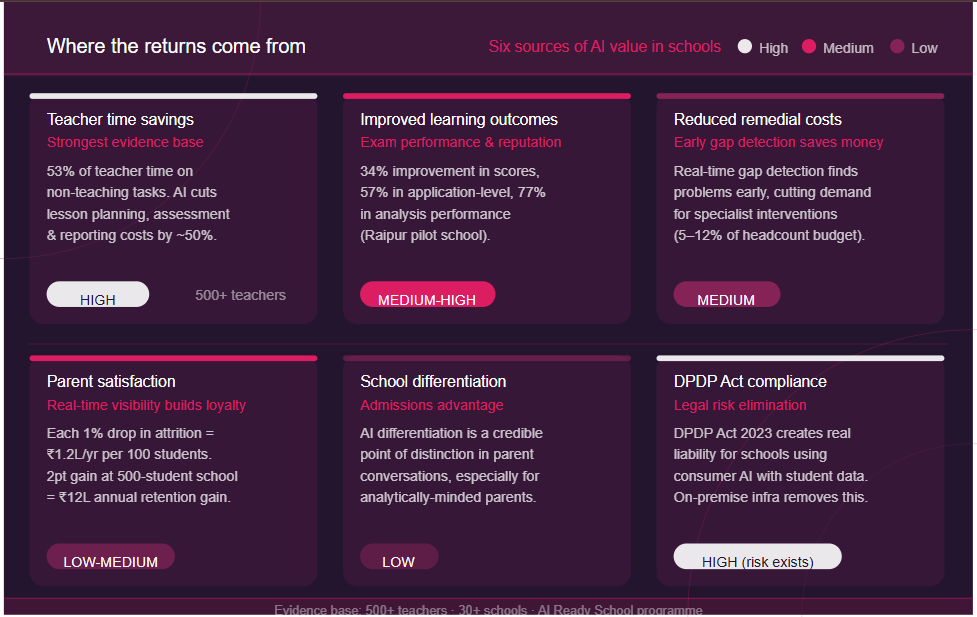

The returns from AI in schools come from six sources. Each has a different time horizon, a different level of certainty, and a different magnitude depending on school context. We present each with honest assessments of certainty and magnitude.

Teacher time savings are the most immediately quantifiable return and the one with the strongest evidence base. The average Indian school teacher spends 53% of their working week on non-teaching tasks. Morpheus reduces the time cost of the most time-consuming of these tasks (lesson planning, assessment creation, and progress reporting) by a documented average of 50%.

Converting this to financial terms requires knowing the cost of teacher time. For a school with an average teacher salary of ₹40,000 per month (about ₹9,200 per week), the weekly cost of non-teaching time per teacher, at 53% of the working week, is approximately ₹5,000. A 50% reduction in the time cost of the three tasks Morpheus addresses most directly (lesson planning, assessment creation, evaluation) represents a saving of roughly ₹2,000 to ₹2,500 per teacher per month in the opportunity cost of professional time.
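The weekly figure can be checked with a few lines of arithmetic. This is a minimal sketch that assumes the monthly salary is spread evenly over 52 weeks; the ₹2,000 to ₹2,500 monthly saving is taken as given from the text rather than derived.

```python
MONTHLY_SALARY = 40_000        # average teacher salary from the text, in rupees
NON_TEACHING_SHARE = 0.53      # share of the working week spent on non-teaching tasks
WEEKS_PER_YEAR = 52            # assumption: salary spread evenly across the year

weekly_salary = MONTHLY_SALARY * 12 / WEEKS_PER_YEAR
weekly_non_teaching_cost = weekly_salary * NON_TEACHING_SHARE
print(f"Weekly cost of non-teaching time: ₹{weekly_non_teaching_cost:,.0f}")  # ₹4,892

# Monthly opportunity-cost saving per teacher, as stated in the text.
monthly_saving_range = (2_000, 2_500)
annual_saving_range = tuple(m * 12 for m in monthly_saving_range)
print(f"Annual saving per teacher: ₹{annual_saving_range[0]:,} to ₹{annual_saving_range[1]:,}")
```

The result, about ₹4,900 per week, is what the text rounds to ₹5,000.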

This is not a cash saving: the school does not pay teachers less. It is a reallocation of professional capacity. Teachers spend 8 to 10 fewer hours per week on mechanical tasks and more hours on the relational, pedagogical, and student-facing work that produces better outcomes. The financial representation of this reallocation is in the productivity of teacher time, not in salary reduction.

However, there are contexts where teacher time savings do translate to direct cost reduction. For schools that currently pay for content creation services — curriculum specialists, external exam paper writers, progress report writers — AI can reduce or eliminate these expenditures directly.

Certainty: High. Evidence base: 500+ teachers across 30+ schools.

The learning outcome improvements documented in AI Ready School implementations translate to financial returns through two mechanisms: reduced remedial coaching requirements and improved examination performance.

The Raipur B.P. Pujari Government School implementation produced a 34% improvement in final class scores, a 57% improvement in application-level performance, and a 77% improvement in analysis-level performance. Even a fraction of this improvement, applied to the student population of a typical private school, produces measurable reduction in the demand for remedial support and measurable improvement in board examination performance.

For private schools in competitive markets, examination performance is directly linked to school reputation and admissions demand. A school that can document consistent improvement in board examination results as a consequence of AI implementation has a marketing asset that commands a premium on fee structures and preference in parent choice. The financial value of this asset is school-specific but can be substantial in competitive urban markets where parent fee sensitivity is inversely related to demonstrated academic outcomes.

Certainty: Medium-high for learning improvement. Medium for financial translation, which depends heavily on market position.

Schools that provide internal remedial support — additional classes, support teachers, specialist interventions — currently fund these programs to address gaps that traditional assessment detects too late. Cypher's real-time gap detection, combined with Morpheus's three-tier alert system, identifies learning gaps when they are small and addressable by the class teacher, rather than when they have compounded to the point where specialist intervention is required.

The economic implication is a reduction in demand for the most expensive interventions and an increase in the effectiveness of early-stage teacher responses. Schools with structured remedial programs typically spend between 5% and 12% of their teacher headcount budget on remedial and support staffing. A documented reduction in the proportion of students requiring intensive remedial support translates directly into savings on this cost line.

Certainty: Medium. Varies significantly by school demographics and existing remedial program scale.

Parent satisfaction has a direct financial translation in schools that operate in competitive markets: it determines fee payment continuation, referral rates, and resistance to fee increases. A parent who has real-time visibility into their child's learning journey — who can see specific knowledge gains, engagement patterns, and skill development rather than waiting for a report card four times a year — is a parent with a substantially different relationship to the school.

The financial value of parent retention is straightforward to calculate. If a school charges ₹1,20,000 per year in fees and has an annual attrition rate of 12%, each percentage point reduction in attrition represents ₹1,20,000 in retained annual revenue per 100 students. For a school of 500 students, a two percentage point reduction in attrition is worth ₹12,00,000 per year.
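The retention arithmetic above can be written as a small function so a finance manager can substitute their own enrolment and fee figures. The function name `retained_revenue` is an illustrative assumption; the inputs are the ones used in the text.

```python
def retained_revenue(students: int, annual_fee: float, attrition_reduction_pp: float) -> float:
    """Annual fee revenue retained when attrition falls by the given percentage points."""
    retained_students = students * attrition_reduction_pp / 100
    return retained_students * annual_fee


# One percentage point per 100 students at ₹1,20,000 per year.
per_100_students = retained_revenue(100, 1_20_000, 1)
print(f"₹{per_100_students:,.0f}")   # ₹120,000 (₹1,20,000 in Indian grouping)

# 500-student school, two percentage point reduction.
school_of_500 = retained_revenue(500, 1_20_000, 2)
print(f"₹{school_of_500:,.0f}")      # ₹1,200,000 (₹12,00,000 in Indian grouping)
```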

We do not have controlled data on AI-driven attrition reduction, so we present this as a directional benefit rather than a documented one. What we do have is consistent qualitative evidence from partner schools that parent satisfaction scores improve significantly after AI Ready School implementation, driven primarily by the visibility that Morpheus and Cypher provide into children's daily learning.

Certainty: Low-medium for quantification. High for directional benefit.

In the Indian private school market, differentiation is increasingly valuable and increasingly difficult to achieve on academic outcomes alone, which are similar across schools in the same tier. Technology differentiation — specifically, AI differentiation done right — is a credible point of distinction in parent conversations and school choice decisions.

The admissions value of genuine AI implementation is difficult to isolate from other factors, but schools that have implemented AI Ready School report it as a significant factor in parent inquiries and admissions conversations, particularly from the technology-aware, analytically sophisticated parent segments that tend to be the most desirable from a school development perspective.

Certainty: Low for quantification. Real as a market dynamic.

Regulatory risk reduction is a cost avoidance return rather than a revenue return, and it is particularly relevant for schools with Matrix infrastructure. India's Digital Personal Data Protection Act 2023 creates genuine legal liability for schools that process children's data through cloud AI tools without appropriate safeguards. The cost of a data breach or regulatory action under DPDP is not easily quantifiable in advance, since it involves legal costs, regulatory penalties, and reputational damage, but it is real, and schools using consumer AI tools for student data processing are exposed to it.

Matrix eliminates this exposure structurally. The financial value of this elimination is the expected value of the DPDP risk that is removed — which is a function of the probability of a regulatory event multiplied by the expected cost of that event. For schools with large student populations and sophisticated parent communities, this calculation can be significant.
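The expected-value reasoning in this paragraph is probability multiplied by cost. The sketch below shows only the shape of that calculation: both inputs are hypothetical placeholders, not estimates of actual DPDP enforcement likelihood or penalty size, and `expected_annual_loss` is a name invented for illustration.

```python
def expected_annual_loss(probability_per_year: float, cost_if_event: float) -> float:
    """Expected yearly cost of a risk: chance of the event times its cost."""
    if not 0 <= probability_per_year <= 1:
        raise ValueError("probability_per_year must be between 0 and 1")
    return probability_per_year * cost_if_event


# Hypothetical inputs a school would replace with its own legal advice.
probability_of_regulatory_event = 0.02    # 2% chance in a given year (placeholder)
cost_of_regulatory_event = 2_00_00_000    # legal, penalty, and reputational cost (placeholder)

risk_removed = expected_annual_loss(probability_of_regulatory_event, cost_of_regulatory_event)
print(f"Expected annual cost avoided: ₹{risk_removed:,.0f}")
```

Because both inputs are uncertain, the result is best read as an order-of-magnitude figure, which matches the "speculative for quantification" rating given to this return.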

Certainty: High that the risk exists. Speculative for quantification of the expected cost.

We now apply this framework to three representative school sizes. All figures are illustrative and based on typical costs and documented outcomes. Every school's calculation will differ based on its specific cost structure, market position, and implementation scope.

Scenario 1: a single school with 500 students and 40 teachers.

Annual platform cost: ₹18,00,000 to ₹24,00,000 (estimated range for full ecosystem)

Teacher time savings: 40 teachers × ₹2,200 per month opportunity cost saved × 12 months = ₹10,56,000 per year in redeployed professional capacity. If this redeployment reduces reliance on one external curriculum specialist position at ₹4,00,000 per year, direct cash saving = ₹4,00,000.

Reduced remedial coaching: Assuming the school currently funds two support teacher positions at ₹3,50,000 each = ₹7,00,000 per year. If Cypher's early gap detection reduces the demand for one of these positions over two years, annualised saving = ₹3,50,000.

Parent retention improvement: 500 students × ₹1,20,000 average annual fee × 2% attrition reduction = ₹12,00,000 per year in retained revenue.

Admissions differentiation (conservative estimate): If AI differentiation drives 10 additional admissions per year at ₹1,20,000 = ₹12,00,000 incremental revenue.

Total documented and directional annual return: ₹31,06,000 to ₹35,00,000

Annual platform investment: ₹18,00,000 to ₹24,00,000

Net annual return: ₹7,00,000 to ₹17,00,000

ROI range: 29% to 94% in Year 1, improving in Years 2 and 3 as implementation matures
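The net return and ROI figures for this school size can be reproduced from the stated ranges. One caveat: the plain sum of the five line items listed above is ₹42,06,000, which is higher than the stated return range, and the text does not spell out how the range was discounted. The sketch below therefore takes the stated range as given and pairs the low return with the high platform cost (and vice versa) to get the worst and best cases.

```python
# Line items for the 500-student school, as listed in the text (rupees).
line_items = {
    "teacher_time_redeployed": 10_56_000,
    "external_specialist_saving": 4_00_000,
    "remedial_saving": 3_50_000,
    "parent_retention": 12_00_000,
    "admissions_differentiation": 12_00_000,
}
plain_sum = sum(line_items.values())
print(f"Plain sum of line items: ₹{plain_sum / 1_00_000:.2f} lakh")  # ₹42.06 lakh

stated_return = (31_06_000, 35_00_000)   # range stated in the text
platform_cost = (18_00_000, 24_00_000)   # annual platform cost range

# Worst case: lowest return against highest cost. Best case: the reverse.
net_low = stated_return[0] - platform_cost[1]
net_high = stated_return[1] - platform_cost[0]
roi_low = net_low / platform_cost[1]
roi_high = net_high / platform_cost[0]

print(f"Net return: ₹{net_low / 1_00_000:.2f} to ₹{net_high / 1_00_000:.2f} lakh")
print(f"ROI: {roi_low:.0%} to {roi_high:.0%}")  # ROI: 29% to 94%
```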

Scenario 2: a mid-sized school with 1,500 students and 100 teachers.

Annual platform cost: ₹40,00,000 to ₹55,00,000

Teacher time savings: 100 teachers × ₹2,200 × 12 = ₹26,40,000 in redeployed capacity. Direct cash saving from reduced external content creation: ₹8,00,000.

Reduced remedial coaching: 5 support teacher positions at ₹3,50,000 each = ₹17,50,000 total. Conservative reduction of 1.5 positions over two years = ₹5,25,000 annualised.

Parent retention improvement: 1,500 students × ₹1,50,000 average fee × 2% attrition reduction = ₹45,00,000.

Admissions differentiation: 25 additional admissions × ₹1,50,000 = ₹37,50,000.

Total documented and directional annual return: ₹1,22,15,000

Annual platform investment: ₹40,00,000 to ₹55,00,000

Net annual return: ₹67,15,000 to ₹82,15,000

ROI range: 122% to 205% in Year 1

Scenario 3: a school network with 4,000 students and 300 teachers across 5 campuses.

Annual platform cost: ₹1,20,00,000 to ₹1,60,00,000 (network pricing)

Teacher time savings: 300 teachers × ₹2,200 × 12 = ₹79,20,000. Direct content creation savings: ₹20,00,000.

Reduced remedial coaching: A conservative 4 positions eliminated network-wide at ₹3,50,000 each = ₹14,00,000.

Parent retention improvement: 4,000 students × ₹1,40,000 average fee × 2% = ₹1,12,00,000.

Admissions differentiation: 60 additional admissions network-wide × ₹1,40,000 = ₹84,00,000.

Network management efficiency: Centralised analytics replacing manual performance reporting across 5 campuses, estimated value in management time: ₹15,00,000.

Total documented and directional annual return: ₹3,24,20,000

Annual platform investment: ₹1,20,00,000 to ₹1,60,00,000

Net annual return: ₹1,64,20,000 to ₹2,04,20,000

ROI range: 103% to 170% in Year 1
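Finance managers who want to verify the two larger scenarios, or rerun them with their own figures, could use a small helper like the one below. It is a sketch under one assumption about method: the worst case divides the net return at the highest platform cost by that same highest cost, and the best case uses the lowest cost for both. The function name `roi_range` is illustrative.

```python
def roi_range(line_items: list[int], cost_low: int, cost_high: int) -> tuple[int, float, float]:
    """Return (total return, worst-case ROI, best-case ROI) for a cost range."""
    total = sum(line_items)
    worst = (total - cost_high) / cost_high   # net at highest cost, over highest cost
    best = (total - cost_low) / cost_low      # net at lowest cost, over lowest cost
    return total, worst, best


# Line items as listed in the text, in rupees.
mid_sized_school = [26_40_000, 8_00_000, 5_25_000, 45_00_000, 37_50_000]
school_network = [79_20_000, 20_00_000, 14_00_000, 1_12_00_000, 84_00_000, 15_00_000]

mid_total, mid_worst, mid_best = roi_range(mid_sized_school, 40_00_000, 55_00_000)
net_total, net_worst, net_best = roi_range(school_network, 1_20_00_000, 1_60_00_000)

print(f"Mid-sized school: ₹{mid_total / 1_00_000:.2f} lakh, ROI {mid_worst:.0%} to {mid_best:.0%}")
# Mid-sized school: ₹122.15 lakh, ROI 122% to 205%
print(f"School network: ₹{net_total / 1_00_000:.2f} lakh, ROI {net_worst:.0%} to {net_best:.0%}")
# School network: ₹324.20 lakh, ROI 103% to 170%
```

Keeping the numerator and denominator on the same cost assumption matters: mixing the low-cost denominator with the high-cost net return overstates the worst case.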

Honest economic analysis requires acknowledging what the numbers do not capture, as clearly as what they do.

The calculations above do not capture the compound improvement effect. An implementation that produces a 34% learning outcome improvement in Year 1 does not maintain exactly that improvement in Year 2 while everything else stays the same. The improvement compounds: students who gained stronger foundational understanding in Year 1 build on that foundation in Year 2. Teachers who developed Morpheus competency in Year 1 are more effective users in Year 2. The ROI figures above are conservative in the sense that they calculate Year 1 returns on Year 1 investments without modelling the compounding that experienced partner schools consistently report.

The calculations also do not capture the cost of inaction. A school that does not implement AI in the 2026-27 academic year is not maintaining its current position. It is falling behind schools that do implement. In a competitive private school market, the admissions and retention consequences of being perceived as technologically behind are real costs that accrue to non-adopters. The question for trustees is not whether AI investment produces returns in isolation. It is whether AI investment produces returns relative to the alternative of not investing, which has its own cost trajectory.

Finally, the calculations do not capture the student outcome value that cannot easily be monetised. A student who develops genuine AI-Sense through Cypher and NEO is better prepared for a labour market that prices AI capability at a documented 43% wage premium. A student who enters university with a portfolio from the NEO AI Innovation Lab is in a categorically different admissions position from a student who enters with only examination scores. These are real returns to the student and to the families who chose the school. They do not appear in the school's financial statements, but they are the reason that families choose schools and the reason that schools build reputations. They are the most important returns of all.

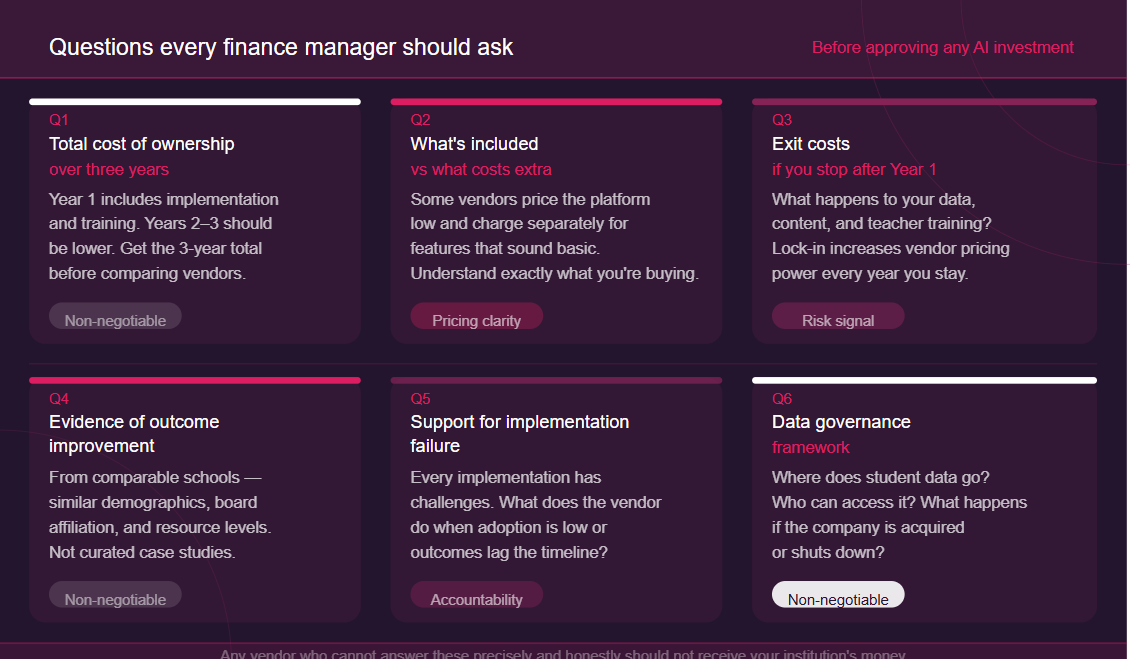

Before approving any AI investment, school finance managers should have clear answers to these questions. Any AI vendor who cannot answer them precisely and honestly should not receive your institution's money.

What is the total cost of ownership over three years? Year 1 costs include implementation and training. Years 2 and 3 should be lower. Get the three-year number before comparing vendors.
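To see why the three-year number matters, consider this minimal sketch comparing two made-up quotes. The vendors and all figures are hypothetical; the point is only that the cheapest Year 1 price and the cheapest three-year total can belong to different vendors.

```python
def three_year_tco(yearly_costs: list[int]) -> int:
    """Total cost of ownership across exactly three years of quoted costs."""
    if len(yearly_costs) != 3:
        raise ValueError("expected costs for exactly three years")
    return sum(yearly_costs)


# Hypothetical quotes in rupees: [Year 1, Year 2, Year 3].
vendors = {
    "Vendor A": [25_00_000, 20_00_000, 20_00_000],  # higher Year 1 (training included)
    "Vendor B": [18_00_000, 24_00_000, 26_00_000],  # low entry price, rising renewals
}

cheapest_year1 = min(vendors, key=lambda name: vendors[name][0])
cheapest_tco = min(vendors, key=lambda name: three_year_tco(vendors[name]))

for name, costs in vendors.items():
    print(f"{name}: Year 1 ₹{costs[0] / 1_00_000:.0f} lakh, "
          f"three-year total ₹{three_year_tco(costs) / 1_00_000:.0f} lakh")
print(f"Cheapest in Year 1: {cheapest_year1}; cheapest over three years: {cheapest_tco}")
```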

What is included in the subscription and what costs extra? Some vendors price the platform at one level and charge separately for features that sound basic. Understand exactly what you are buying.

What are the exit costs? If you decide to stop using the platform after Year 1, what happens to your data, your content, and your teacher training investment? A platform you cannot leave without significant loss is a platform whose pricing power increases every year you stay.

What is the evidence of learning outcome improvement in comparable schools? Not case studies from dissimilar contexts. Evidence from schools with similar demographics, similar board affiliations, and similar resource levels to yours.

How does the vendor support implementation failure? Every implementation has challenges. What does the vendor do when adoption is lower than expected or outcomes are not appearing on the projected timeline?

What is the data governance framework? Where does student data go, who can access it, and what happens to it if the company is acquired or shuts down?

AI investment in schools is justifiable on economic grounds for almost every school size and context. The returns — teacher time redeployment, outcome improvement, reduced remedial spending, parent retention, admissions differentiation — consistently exceed the investment for schools that implement seriously and measure outcomes honestly.

The qualification is implementation quality. The economic case for AI in schools depends entirely on implementation that produces the outcomes the returns are calculated against. A platform that is purchased and underused does not produce teacher time savings or learning outcome improvements. It produces a subscription cost with no return. The schools that achieve the ROI figures in this analysis are the schools that treated implementation as the strategic priority it is, identified internal champions, committed to ongoing professional development, and measured outcomes from day one.

The economics of AI in schools are sound. The economics of AI purchases in schools are not. The difference is implementation.

The question your trustees should be asking is not whether AI investment makes economic sense. The evidence is clear that it does. The question is whether your school is ready to implement it in the way that makes the economic case real.

Every school's cost structure, market position, and implementation context are different. The ROI calculations above are illustrative frameworks. A custom pricing proposal from AI Ready School will give your finance manager and trustees the specific numbers for your school's size, board affiliation, infrastructure environment, and implementation scope.

AI Ready School provides a complete AI ecosystem for K-12 schools including Cypher (personalised learning companion), Morpheus (AI teaching agent), Zion (safe AI tool suite), NEO (AI Innovation Labs), and Matrix (sovereign AI infrastructure). Pricing is transparent, implementation support is included, and the economic case is documented.

To get a custom pricing proposal for your school or network, reach out at hey@aireadyschool.com or call +91 9100013885.